The magic behind AI code generation

Have you ever wondered how AI tools like GitHub Copilot or ChatGPT can seemingly conjure lines of code out of thin air? It feels like magic, but it’s actually a sophisticated dance of algorithms, data, and predictive power. At TechDecoded, we’re all about demystifying complex tech, and today we’re pulling back the curtain on how artificial intelligence generates code.

From simple autocomplete suggestions to crafting entire functions, AI is rapidly transforming the way we build software. Understanding its inner workings isn’t just for developers; it’s for anyone curious about the future of technology and how these powerful tools are built. Let’s dive in!

The foundation: large language models (LLMs)

At the heart of most modern AI code generation lies a technology you’re probably already familiar with: Large Language Models (LLMs). These are the same types of AI that power chatbots and content generators. But how do they go from understanding human language to writing functional code?

- Vast training data: LLMs are trained on enormous datasets that include not just human text but also large amounts of publicly available code from sources like GitHub. This allows them to learn patterns, syntax, and common programming idioms across many languages.

- Pattern recognition: Through this training, the AI learns to recognize statistical relationships between different parts of code and natural language descriptions. It doesn’t ‘understand’ code in a human sense, but it becomes incredibly adept at predicting the most probable next token (a word, a fragment of a word, or a symbol) based on the input it receives.
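To make "statistical relationships" a little more concrete, here is a minimal sketch that counts which token tends to follow which in a tiny, made-up corpus. Everything in it is an assumption for illustration: the corpus and the function names `train_bigram_counts` and `next_token_probabilities` are invented here. Real LLMs learn far richer patterns with neural networks rather than simple counts.

```python
from collections import Counter, defaultdict

# Tiny, made-up "training corpus" of already-tokenized code lines.
# This is an illustrative assumption, not real training data.
corpus = [
    ["for", "i", "in", "range", "(", "n", ")", ":"],
    ["for", "x", "in", "items", ":"],
    ["for", "i", "in", "range", "(", "10", ")", ":"],
    ["if", "x", "in", "items", ":"],
]


def train_bigram_counts(lines):
    """Count how often each token is immediately followed by each other token."""
    counts = defaultdict(Counter)
    for tokens in lines:
        for current, following in zip(tokens, tokens[1:]):
            counts[current][following] += 1
    return counts


def next_token_probabilities(counts, token):
    """Turn the raw follower counts for one token into probabilities."""
    followers = counts.get(token, Counter())
    total = sum(followers.values())
    return {tok: n / total for tok, n in followers.items()}


counts = train_bigram_counts(corpus)
probs_after_for = next_token_probabilities(counts, "for")

# In this corpus "for" is followed by "i" twice and "x" once,
# so "i" is the most probable next token.
print(probs_after_for)
print(max(probs_after_for, key=probs_after_for.get))
```

The same idea, scaled up enormously and conditioned on much more than the previous token, is what lets a model guess that `range` is usually followed by `(`.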

How AI “understands” code

When we say AI “understands” code, it’s a bit of a simplification. What it actually does is process and represent code in a way that allows it to make predictions. Here’s a simplified breakdown:

- Tokenization: Just like natural language, code is broken down into ‘tokens’. These can be keywords (`if`, `for`), operators (`+`, `=`), variable names, or even punctuation.

- Embeddings: Each token is then converted into a numerical representation called an ‘embedding’. These embeddings capture the semantic and syntactic meaning of the token in a high-dimensional space. Tokens with similar meanings or contexts will have embeddings that are numerically close to each other.

- Contextual learning: The AI uses complex neural network architectures, particularly transformers, to process sequences of these embeddings. This allows it to understand the context of the code it’s seeing – not just individual tokens, but how they relate to each other in a larger structure, like a function or a class.
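The first two steps above can be sketched in a few lines of Python. Two caveats. The tokenizer here is Python's standard-library `tokenize` module, which splits code the way the Python interpreter does; LLMs typically use learned subword tokenizers instead, so treat this as a stand-in. And the three-dimensional vectors in `TOY_EMBEDDINGS` are hand-written assumptions chosen to make the point; real embeddings are learned during training and have hundreds or thousands of dimensions. The transformer step is not shown.

```python
import io
import math
import tokenize


def tokenize_code(source):
    """Split Python source into token strings using the standard-library lexer."""
    reader = io.StringIO(source).readline
    return [
        tok.string
        for tok in tokenize.generate_tokens(reader)
        if tok.string.strip()  # drop newlines, indentation, and the end marker
    ]


# Hand-written toy embeddings (an assumption for illustration only).
# Keywords are placed near each other, and operators near each other.
TOY_EMBEDDINGS = {
    "if": [0.9, 0.1, 0.0],
    "for": [0.8, 0.2, 0.1],
    "+": [0.0, 0.9, 0.2],
    "=": [0.1, 0.8, 0.3],
}


def cosine_similarity(a, b):
    """Return the cosine of the angle between two vectors (1.0 = same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


tokens = tokenize_code("if count > 0:\n    total = total + count\n")
print(tokens)

keyword_similarity = cosine_similarity(TOY_EMBEDDINGS["if"], TOY_EMBEDDINGS["for"])
mixed_similarity = cosine_similarity(TOY_EMBEDDINGS["if"], TOY_EMBEDDINGS["+"])

# The two keywords end up much closer to each other than a keyword and an operator.
print(keyword_similarity > mixed_similarity)
```

"Numerically close" here means a cosine similarity near 1.0: the two keyword vectors point in almost the same direction, while the keyword and the operator do not.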

The generation process: prompt to prediction

So, you give an AI a prompt – maybe a comment describing what a function should do, or the start of a code block. What happens next?

- Encoding the prompt: The AI takes your natural language prompt and any existing code, tokenizes it, and converts it into embeddings.

- Predicting the next token: Using its learned patterns, the AI assigns a probability to each possible next token and picks a likely one, often the single most probable. This isn’t a random guess; it’s an informed prediction based on billions of examples it has seen.

- Iterative generation: This predicted token is then added to the input, and the process repeats. The AI continuously predicts the next token, building out the code token by token, until it reaches a logical stopping point (e.g., the end of a function, or a specific instruction from the user).

- Refinement and selection: Often, the AI generates several possible continuations and uses internal scoring mechanisms or further user interaction to select the most suitable one.
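Here is a rough sketch of that loop, under some loud assumptions. The table `NEXT_TOKEN_PROBS` is hand-written and looks only at the previous token, whereas a real model computes probabilities from the whole context with a neural network. The `<start>` and `<end>` markers, along with the function names `generate_greedy` and `sequence_log_prob`, are hypothetical names chosen for this example. The last part shows one simple way candidates could be scored (summing log-probabilities); actual tools may rank their suggestions quite differently.

```python
import math

# Hypothetical next-token probabilities, hand-written for illustration.
NEXT_TOKEN_PROBS = {
    "<start>": {"def": 1.0},
    "def": {"add": 0.6, "sub": 0.4},
    "add": {"(": 1.0},
    "sub": {"(": 1.0},
    "(": {"a": 1.0},
    "a": {",": 0.7, ")": 0.3},
    ",": {"b": 1.0},
    "b": {")": 1.0},
    ")": {":": 1.0},
    ":": {"<end>": 1.0},
}


def generate_greedy(prompt_tokens, max_tokens=20):
    """Repeatedly append the most probable next token until <end> or the length limit.

    prompt_tokens must contain at least one token.
    """
    tokens = list(prompt_tokens)
    for _ in range(max_tokens):
        candidates = NEXT_TOKEN_PROBS.get(tokens[-1], {})
        if not candidates:
            break  # the toy table has no prediction for this token
        next_token = max(candidates, key=candidates.get)
        if next_token == "<end>":
            break  # logical stopping point
        tokens.append(next_token)
    return tokens


def sequence_log_prob(tokens):
    """Score a token sequence by summing the log-probability of each step."""
    return sum(
        math.log(NEXT_TOKEN_PROBS[prev][nxt])
        for prev, nxt in zip(tokens, tokens[1:])
    )


greedy = generate_greedy(["<start>"])
print(" ".join(greedy[1:]))

# Refinement and selection: compare two candidate continuations and keep the better one.
candidates = [
    ["<start>", "def", "add", "(", "a", ",", "b", ")", ":"],
    ["<start>", "def", "sub", "(", "a", ")", ":"],
]
best = max(candidates, key=sequence_log_prob)
print(" ".join(best[1:]))
```

Always taking the top token is called greedy decoding. Many tools instead sample from the probabilities, which is one reason the same prompt can produce different suggestions on different runs.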

Beyond simple suggestions: advanced applications

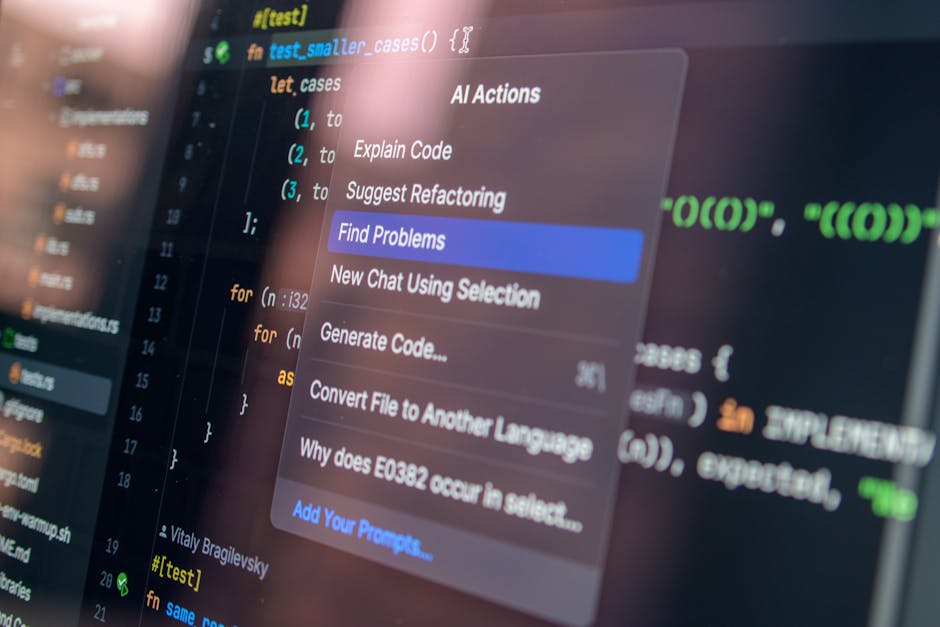

AI’s role in code generation extends far beyond just suggesting the next line. It’s evolving to handle more complex tasks:

- Code completion and generation: From filling in variable names to generating entire functions based on a docstring.

- Debugging and error fixing: AI can analyze error messages and suggest potential fixes or even refactor problematic code sections.

- Code translation: Converting code from one programming language to another.

- Test case generation: Creating unit tests for existing code to ensure its functionality.

- Refactoring and optimization: Suggesting ways to improve code readability, performance, or adherence to best practices.

Challenges and limitations of AI code generation

While incredibly powerful, AI code generation isn’t without its hurdles:

- Accuracy and correctness: AI-generated code isn’t always perfect. It can contain bugs, security vulnerabilities, or simply not work as intended, requiring human review and correction.

- Contextual understanding: LLMs can struggle with highly specific or novel requirements that deviate significantly from their training data. They lack true understanding of the problem domain.

- Creativity and innovation: AI excels at pattern matching, but it’s less adept at truly novel problem-solving or inventing entirely new algorithms.

- Bias and security: If trained on flawed or biased code, the AI can perpetuate those issues. Security vulnerabilities present in training data can also be replicated.

- Over-reliance: There’s a risk of developers becoming overly reliant on AI, potentially hindering their own problem-solving skills or understanding of fundamental concepts.

Navigating the future of code with AI

AI code generation is not about replacing human developers, but augmenting their capabilities. It’s a powerful co-pilot that can handle repetitive tasks, accelerate development cycles, and free up human creativity for more complex, strategic challenges. As these models continue to evolve, we can expect even more sophisticated assistance, leading to higher productivity and potentially new paradigms in software development.

The key lies in understanding how to effectively collaborate with AI, leveraging its strengths while being aware of its limitations. For developers, this means honing their skills in prompt engineering, critical code review, and understanding the underlying principles of the generated code. For everyone else, it means appreciating the intricate dance of data and algorithms that makes this technological marvel possible.
