The invisible hand: AI’s growing reach into our lives

Artificial intelligence is no longer a futuristic concept; it’s woven into the fabric of our daily existence. From personalized recommendations on streaming services to smart assistants managing our homes, AI enhances convenience and efficiency. However, this pervasive integration comes with a significant trade-off: our privacy. As AI systems become more sophisticated and data-hungry, understanding the potential privacy risks is crucial for anyone navigating the modern digital landscape.

At TechDecoded, we believe in demystifying technology. Today, we’re diving deep into the privacy implications of AI, breaking down how these powerful tools can inadvertently expose or misuse our personal information, and what we can do about it.

The insatiable appetite: why AI needs so much data

The very foundation of powerful AI lies in vast quantities of data. Machine learning models learn by identifying patterns in massive datasets, whether those datasets contain images, text, audio, or behavioral information. Broadly speaking, the more relevant, high-quality data a system is trained on, the ‘smarter’ it tends to become, leading to better predictions, more accurate classifications, and more personalized experiences.

This data often comes from:

- Direct user input: What you type, say, or upload.

- Behavioral tracking: Your clicks, browsing history, app usage, and location data.

- Sensors: Data from smart devices, cameras, microphones, and wearables.

- Third-party sources: Data purchased from, or shared by, other companies.

While this data fuels innovation, it also creates a massive reservoir of personal information, making it a prime target for privacy breaches and misuse.

Anonymity’s illusion: the re-identification risk

Many organizations claim to ‘anonymize’ data before using it for AI training or analysis. The idea is to strip away personally identifiable information (PII) such as names, addresses, or Social Security numbers. However, research has repeatedly shown that true anonymity is incredibly difficult, if not impossible, to achieve with large datasets.

Even seemingly innocuous pieces of information can act as a unique identifier when combined. For instance, a person’s ZIP code, birth date, and gender can often single them out in a large dataset. Matching those attributes against another source that does contain names, such as a public voter roll, links individuals back to their supposedly anonymous records. This process, known as ‘re-identification,’ can expose sensitive details about their lives.
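
To make the idea concrete, here is a minimal Python sketch of such a linkage attack. The two tiny datasets, their field names, and the `reidentify` helper are hypothetical and invented purely for illustration: the ‘anonymized’ records have names removed but keep ZIP code, birth date, and gender, while the ‘public’ records pair those same attributes with names.

```python
from collections import Counter

# Hypothetical 'anonymized' health records: names removed, quasi-identifiers kept.
anonymized_records = [
    {"zip": "02138", "birth_date": "1965-07-31", "gender": "F", "diagnosis": "hypertension"},
    {"zip": "02138", "birth_date": "1971-03-12", "gender": "M", "diagnosis": "asthma"},
    {"zip": "02139", "birth_date": "1980-11-02", "gender": "F", "diagnosis": "diabetes"},
    {"zip": "02139", "birth_date": "1980-11-02", "gender": "F", "diagnosis": "migraine"},
]

# Hypothetical public dataset (think of a voter roll) that does include names.
public_records = [
    {"name": "Alice Example", "zip": "02138", "birth_date": "1965-07-31", "gender": "F"},
    {"name": "Bob Example", "zip": "02138", "birth_date": "1971-03-12", "gender": "M"},
    {"name": "Carol Example", "zip": "02139", "birth_date": "1980-11-02", "gender": "F"},
]

QUASI_IDENTIFIERS = ("zip", "birth_date", "gender")


def key(record):
    """Combine the quasi-identifiers into a single hashable tuple."""
    return tuple(record[field] for field in QUASI_IDENTIFIERS)


def reidentify(anonymized, public):
    """Link an anonymized row to a name when its quasi-identifier
    combination appears exactly once in both datasets."""
    anon_counts = Counter(key(r) for r in anonymized)
    public_counts = Counter(key(r) for r in public)
    names = {key(r): r["name"] for r in public}
    matches = []
    for r in anonymized:
        k = key(r)
        if anon_counts[k] == 1 and public_counts[k] == 1:
            matches.append((names[k], r["diagnosis"]))
    return matches


print(reidentify(anonymized_records, public_records))
# → [('Alice Example', 'hypertension'), ('Bob Example', 'asthma')]
```

Notice that the two records sharing the same ZIP code, birth date, and gender were not matched: when several people share a combination, an attacker can’t tell them apart. That observation is the intuition behind defenses such as k-anonymity.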

Algorithmic bias: when AI makes unfair judgments

AI systems are only as unbiased as the data they’re trained on. If a dataset reflects existing societal biases, such as historical discrimination in lending, hiring, or law enforcement, the AI model will learn and perpetuate those biases. This can lead to privacy infringements and discriminatory outcomes.

- Credit scoring: An AI system trained on biased historical data might unfairly deny loans to certain demographic groups.

- Hiring decisions: AI tools used for resume screening could inadvertently filter out qualified candidates based on patterns learned from past biased hiring practices.

- Facial recognition: Systems trained predominantly on one demographic might perform poorly or misidentify individuals from other groups, leading to false accusations or privacy violations.

These biases, often hidden deep within complex algorithms, can lead to unfair treatment and a loss of privacy for affected individuals, impacting their opportunities and reputation.
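
One simple way to surface outcomes like the credit-scoring example is to compare how often each group receives a favorable decision. The Python sketch below computes per-group approval rates and their ratio (often called the disparate impact ratio), flagging values under 0.8, the ‘four-fifths’ rule of thumb from U.S. employment guidance. The decisions and group labels are made-up illustrative data, and a single metric like this can hint at bias but cannot prove or rule it out.

```python
from collections import defaultdict

# Hypothetical model decisions: (demographic group, approved?)
decisions = [
    ("group_a", True), ("group_a", True), ("group_a", True), ("group_a", False),
    ("group_b", True), ("group_b", False), ("group_b", False), ("group_b", False),
]


def selection_rates(decisions):
    """Fraction of favorable outcomes (e.g. loan approvals) per group."""
    totals = defaultdict(int)
    approved = defaultdict(int)
    for group, outcome in decisions:
        totals[group] += 1
        approved[group] += int(outcome)
    return {g: approved[g] / totals[g] for g in totals}


def disparate_impact_ratio(rates):
    """Lowest group selection rate divided by the highest."""
    return min(rates.values()) / max(rates.values())


rates = selection_rates(decisions)
ratio = disparate_impact_ratio(rates)
print(rates)            # {'group_a': 0.75, 'group_b': 0.25}
print(round(ratio, 3))  # 0.333
if ratio < 0.8:
    print("Possible adverse impact under the four-fifths rule of thumb")
```

Checks like this only look at outcomes; explaining *why* a model treats groups differently still requires examining its training data and features.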

The watchful eye: AI in surveillance and monitoring

AI-powered surveillance is becoming increasingly prevalent, from smart city initiatives to workplace monitoring. Facial recognition, gait analysis, and sentiment analysis technologies are being deployed in public spaces and private environments, raising significant privacy concerns.

While proponents argue these technologies enhance security, critics point to the potential for mass surveillance, erosion of civil liberties, and the creation of comprehensive digital profiles without explicit consent. Imagine a world where every public interaction, every purchase, and every movement is tracked and analyzed by AI, creating a permanent record that could be used for purposes far beyond its original intent.

Fortress under siege: data breaches and security vulnerabilities

The more data an organization collects and processes for AI, the larger its ‘attack surface’ becomes. AI systems often require access to vast, diverse, and sometimes highly sensitive datasets. If these datasets are compromised through a cyberattack or internal breach, the privacy implications can be catastrophic.

A breach involving an AI training dataset could expose millions of personal records, including financial details, health information, or even biometric data. The sheer volume and sensitivity of data involved in AI operations make them particularly attractive targets for malicious actors, posing a constant threat to individual privacy.

Navigating the future: protecting your privacy in an AI world

As AI continues to evolve, so too must our approach to privacy. Protecting our personal information in an AI-driven world requires a multi-faceted strategy involving individuals, developers, and policymakers.

- For individuals: Be mindful of the data you share online and with smart devices. Understand privacy policies, use strong passwords, and regularly review your privacy settings. Advocate for stronger data protection.

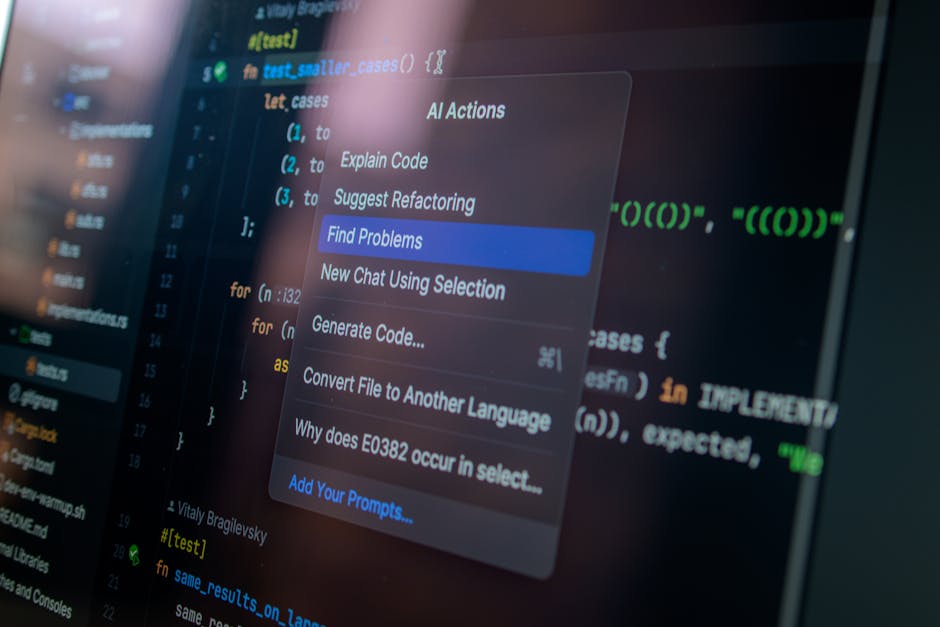

- For AI developers and organizations: Prioritize ‘privacy by design,’ embedding privacy considerations from the outset of AI development. Implement robust data anonymization techniques while recognizing that, given the re-identification risk, anonymization alone is no guarantee. Conduct regular security audits, and ensure transparency in data collection and usage.

- For policymakers: Develop comprehensive regulations that balance innovation with strong privacy protections, ensuring accountability for AI systems and providing individuals with greater control over their data.
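
As a rough illustration of what anonymization can look like in practice, the Python sketch below applies generalization, one common step toward k-anonymity: coarsening quasi-identifiers so that every record shares its combination with at least k-1 others. The records, the chosen coarsening (a three-digit ZIP prefix and birth year only), and the helper names are hypothetical; real systems must weigh this loss of detail against the data’s usefulness, and k-anonymity on its own does not defend against every attack.

```python
from collections import Counter

# Hypothetical records with quasi-identifiers (names already removed).
records = [
    {"zip": "02138", "birth_date": "1965-07-31", "gender": "F"},
    {"zip": "02139", "birth_date": "1965-02-14", "gender": "F"},
    {"zip": "02141", "birth_date": "1971-03-12", "gender": "M"},
    {"zip": "02142", "birth_date": "1971-09-30", "gender": "M"},
]

QUASI_IDENTIFIERS = ("zip", "birth_date", "gender")


def k_anonymity(rows):
    """Smallest number of rows that share any one quasi-identifier combination."""
    counts = Counter(tuple(r[f] for f in QUASI_IDENTIFIERS) for r in rows)
    return min(counts.values())


def generalize(row):
    """Coarsen quasi-identifiers: keep a 3-digit ZIP prefix and only the birth year."""
    return {
        "zip": row["zip"][:3] + "**",
        "birth_date": row["birth_date"][:4],
        "gender": row["gender"],
    }


print(k_anonymity(records))                           # 1: every row is unique
print(k_anonymity([generalize(r) for r in records]))  # 2
```

Here generalization raises k from 1 (every person is unique) to 2. Larger values of k make re-identification harder but blur the data further, which is why privacy by design means choosing these trade-offs deliberately rather than after the fact.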

The journey towards a future where AI benefits humanity without compromising our fundamental right to privacy is ongoing. By understanding the risks and actively participating in the conversation, we can collectively shape a more secure and privacy-respecting AI landscape.