The paradox of AI: brilliant yet bafflingly naive

Artificial intelligence has achieved incredible feats. From beating grandmasters in chess and Go to generating stunning art and complex code, AI systems often seem to possess superhuman capabilities. Yet questions that any child could answer, such as whether a fish can ride a bicycle or whether pouring water into a sieve will fill it, can still trip up sophisticated systems. This gap reveals one of AI’s most persistent and perplexing challenges: the lack of common sense.

At TechDecoded, we aim to demystify technology. Today, we’re diving into why AI, despite its computational prowess, still struggles with the intuitive understanding of the world that even a toddler possesses.

What is common sense, anyway?

Before we dissect AI’s shortcomings, let’s define what we mean by “common sense.” For humans, common sense isn’t a set of explicit rules we’re taught; it’s an implicit, intuitive understanding of how the world works. It encompasses:

- Basic physics: Objects fall down, not up. Solid objects can’t pass through each other.

- Object permanence: Things continue to exist even when out of sight.

- Causality: Understanding cause and effect (e.g., if you push a glass off a table, it will fall and probably break).

- Social norms: Unwritten rules about how people interact.

- Contextual understanding: Interpreting situations based on broader knowledge.

This vast reservoir of background knowledge allows us to navigate novel situations, make inferences, and understand implications without needing every single detail explicitly stated.

AI’s reliance on data, not understanding

The core reason AI lacks common sense lies in its fundamental learning paradigm. Most modern AI, especially deep learning, operates by identifying patterns in vast datasets. It excels at correlation, not causation. When an AI identifies a cat, it’s not because it “understands” what a cat is in a biological or experiential sense. Instead, it has learned to recognize specific pixel patterns, shapes, and textures that frequently appear together in images labeled “cat.”

This means such a system doesn’t build a mental model of the world the way people do. It doesn’t reason from first principles that cats are animals, have fur, or typically don’t drive cars; it reflects the statistics of the data it was trained on. If nothing in that data relates cats to driving, the system has no basis for judging the idea at all, let alone for explaining why it’s absurd.
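To make the point concrete, here is a deliberately simplistic sketch of learning by co-occurrence. It is not how deep networks work internally; the training pairs and the `train` and `score` functions are made up for illustration. What it shows is that a purely statistical scorer gives the same answer for an absurd pairing and for a perfectly plausible one it simply never saw.

```python
from collections import Counter

# Hypothetical toy "training data": (subject, action) pairs seen in captions.
TRAINING_PAIRS = [
    ("cat", "sleeps"), ("cat", "purrs"), ("cat", "sleeps"),
    ("person", "drives"), ("person", "sleeps"), ("dog", "barks"),
]

def train(pairs):
    """Count how often each (subject, action) pair appears."""
    return Counter(pairs)

def score(model, subject, action):
    """Relative frequency of the pair among all pairs with that subject."""
    total = sum(n for (s, _), n in model.items() if s == subject)
    if total == 0:
        return 0.0
    return model[(subject, action)] / total

model = train(TRAINING_PAIRS)
print(score(model, "cat", "sleeps"))  # about 0.67: seen often
print(score(model, "cat", "drives"))  # 0.0: absurd
print(score(model, "cat", "eats"))    # 0.0: plausible, but never seen
```

The last two scores are identical. Nothing in the counts says *why* one pairing is ridiculous and the other is ordinary, and that missing "why" is what common sense supplies.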

The problem of context and causality

Humans constantly infer and predict based on context and an understanding of cause and effect. If you see a wet street, you infer it probably rained. If you see someone stumble, you anticipate they might fall. AI struggles profoundly with this.

For an AI, every scenario is largely independent unless explicitly linked in its training data. It doesn’t inherently grasp “why” things happen. It can predict that if you type “What is the capital of France?”, the answer is “Paris” because it has seen that pattern countless times. But it doesn’t understand the geopolitical reasons or historical context behind Paris being the capital. This makes it brittle when faced with situations outside its training distribution or requiring genuine causal reasoning.

Lack of embodied experience

A significant portion of human common sense is forged through embodied experience – interacting with the physical world through our senses and bodies. We learn about gravity by falling, about texture by touching, about space by moving through it. Our brains are wired to process sensory input and build a rich, multi-modal understanding of reality.

AI, conversely, lives in a digital realm. It processes numbers, not sensations. It doesn’t feel the weight of an object, the heat of a flame, or the resistance of a door. This lack of direct, physical interaction with the environment deprives AI of a crucial foundation for developing intuitive common sense.

The frame problem and symbolic reasoning

The “frame problem” is a classic challenge in AI. In its original form, it asks how a logic-based system can represent what an action leaves unchanged without listing every unaffected fact; more broadly, it highlights how hard it is to separate relevant information from irrelevant. When you move a cup from a table, you intuitively know that the table itself, the room, the city, and the universe haven’t moved. Only the cup’s position has changed. For a system that reasons from explicit facts, stating what *hasn’t* changed after every action quickly becomes unmanageable.
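A rough sketch of why this gets out of hand, using a made-up toy world (the facts and actions below are assumptions for illustration). A naive logical encoding needs one "this stays the same" statement for every action and every fact that action does not touch. The second function shows the shortcut used by STRIPS-style planners, which simply assume that anything an action does not mention is unchanged.

```python
from itertools import product

# Hypothetical toy world: facts that can be true or false.
FLUENTS = ["cup_on_table", "cup_on_shelf", "table_in_room",
           "door_closed", "light_on"]

# action name -> (facts it makes true, facts it makes false)
ACTIONS = {
    "move_cup_to_shelf": ({"cup_on_shelf"}, {"cup_on_table"}),
    "open_door": (set(), {"door_closed"}),
    "switch_light_off": (set(), {"light_on"}),
}

def frame_axioms(fluents, actions):
    """Every (action, fact) pair where the action leaves the fact alone.
    A naive encoding has to state each of these explicitly."""
    axioms = []
    for (name, (adds, deletes)), fluent in product(actions.items(), fluents):
        if fluent not in adds and fluent not in deletes:
            axioms.append((name, fluent))
    return axioms

def apply_action(state, action):
    """STRIPS-style update: change only what the action lists,
    and assume everything else persists."""
    adds, deletes = ACTIONS[action]
    return (state - deletes) | adds

print(len(frame_axioms(FLUENTS, ACTIONS)))  # 11 "nothing changed" statements

state = {"cup_on_table", "table_in_room", "door_closed", "light_on"}
print(apply_action(state, "move_cup_to_shelf"))
```

Even with three actions and five facts, 11 of the 15 action-fact combinations are "nothing happened" statements. The count grows roughly with the number of actions times the number of facts, which is why real-world scale is so punishing.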

Traditional symbolic AI attempted to encode common sense as explicit rules, but this proved impractical at scale because of the sheer volume and complexity of human knowledge. Modern neural networks avoid explicit rules but still struggle with the implicit filtering and contextual understanding that humans effortlessly apply.
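The sieve question from the introduction makes a handy illustration of the rule-writing treadmill. The function below is a hypothetical, hand-written rule set (the property names are assumptions, not any real knowledge base). Each exception needs its own line, and anything the author did not think of silently falls through to the default.

```python
def water_stays_in(container):
    """Hand-written rules for whether poured water stays in a container.
    `container` is a dict of hypothetical properties."""
    if container.get("has_holes"):
        return False  # exception: sieves, colanders
    if container.get("upside_down"):
        return False  # exception: nothing to pour into
    if container.get("already_full"):
        return False  # exception: it overflows
    return True       # default rule: containers hold water

bucket = {"name": "bucket"}
sieve = {"name": "sieve", "has_holes": True}
paper_bag = {"name": "paper bag"}  # no rule about soggy paper yet

for item in (bucket, sieve, paper_bag):
    print(item["name"], water_stays_in(item))
```

The sieve is handled because someone wrote a rule for it. The paper bag comes back as `True`, which is wrong for any practical purpose, and fixing it means adding yet another rule.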

Bridging the gap: current research and future directions

Researchers are actively working to imbue AI with common sense. Some promising avenues include:

- Neuro-symbolic AI: Combining the pattern recognition power of neural networks with the logical reasoning capabilities of symbolic AI.

- Reinforcement learning with world models: Training AI agents to build internal representations (models) of their environment, allowing them to predict outcomes and plan actions more effectively.

- Large language models (LLMs) and emergent abilities: The vast scale of LLMs sometimes gives rise to “emergent” reasoning abilities that are not true common sense but can mimic it in certain contexts, though often superficially.

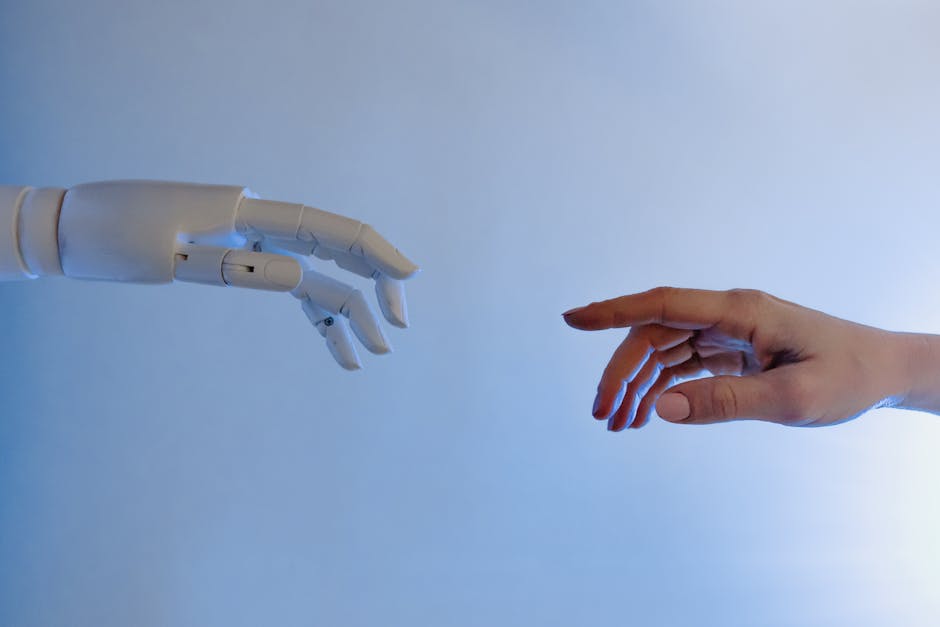

- Embodied AI: Developing AI systems that learn through physical interaction with the world, often through robotics.

These approaches aim to move AI beyond mere pattern matching towards a more profound, human-like understanding of the world.
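As a minimal sketch of the world-model idea from the list above, the class below records what happened after each action and uses those records to predict an outcome before acting. Real systems typically learn neural models from rich sensory data; this lookup table, along with the class name and state labels, is an assumption made purely for illustration.

```python
from collections import Counter, defaultdict

class TabularWorldModel:
    """Learns which next state tends to follow a (state, action) pair."""

    def __init__(self):
        self.transitions = defaultdict(Counter)

    def observe(self, state, action, next_state):
        """Record one experienced transition."""
        self.transitions[(state, action)][next_state] += 1

    def predict(self, state, action):
        """Most frequently observed outcome, or None if never experienced."""
        outcomes = self.transitions.get((state, action))
        if not outcomes:
            return None
        return outcomes.most_common(1)[0][0]

model = TabularWorldModel()
for _ in range(3):
    model.observe("glass_on_table", "push", "glass_on_floor")
model.observe("glass_on_table", "push", "glass_on_table")  # a push too gentle

print(model.predict("glass_on_table", "push"))  # glass_on_floor
print(model.predict("glass_on_table", "lift"))  # None: no experience yet
```

The agent can now anticipate that pushing the glass usually ends badly, but only because it has experienced that exact situation. Generalizing from a glass to a vase is the part that still takes something closer to common sense.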

Navigating AI’s limitations in the real world

Understanding AI’s common sense deficit isn’t about dismissing its power; it’s about using it wisely. For us at TechDecoded, this means:

- Leverage AI for its strengths: AI excels at data analysis, pattern recognition, automation of repetitive tasks, and generating creative content based on existing styles.

- Recognize its boundaries: Don’t expect AI to make nuanced judgments, handle truly novel situations requiring deep understanding, or replace human intuition in critical decision-making.

- Maintain human oversight: Always keep a human in the loop, especially for applications where errors could have significant consequences.

- Focus on practical applications: Use AI as a powerful tool to augment human capabilities, not to replicate human consciousness.
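One common way to put the human-in-the-loop advice into practice is a simple routing rule: accept a prediction automatically only when the model is confident and the stakes are low. The sketch below is a hypothetical example; the `Prediction` type, the `route` function, and the 0.9 threshold are all assumptions, and a model's reported confidence is not always well calibrated.

```python
from dataclasses import dataclass

@dataclass
class Prediction:
    label: str
    confidence: float  # assumed to be a score between 0 and 1

def route(prediction, threshold=0.9, high_stakes=False):
    """Decide whether a prediction can be applied without a person checking it."""
    if high_stakes or prediction.confidence < threshold:
        return "human_review"
    return "auto_accept"

print(route(Prediction("approve", 0.95)))                    # auto_accept
print(route(Prediction("approve", 0.60)))                    # human_review
print(route(Prediction("approve", 0.99), high_stakes=True))  # human_review
```

The `high_stakes` flag overrides confidence entirely, which reflects the point above: where errors are costly, a person reviews the decision no matter how sure the model claims to be.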

While true common-sense AI remains a distant goal, acknowledging AI’s current limitations allows us to build more robust, reliable, and ultimately more useful systems for the present and near future.
