The allure of AGI and its speculative shadow

Artificial General Intelligence (AGI) – the concept of AI possessing human-level cognitive abilities, capable of learning, understanding, and applying intelligence across a wide range of tasks – often dominates headlines and fuels both utopian dreams and dystopian fears. From science fiction blockbusters to serious academic discussions, the arrival of AGI is frequently presented as an inevitable, perhaps imminent, event. However, when we strip away the hype and delve into the current state of AI research, it becomes clear that many predictions about AGI are, at best, educated guesses, and more often, pure speculation.

Defining the elusive: what AGI truly means

Before we can predict its arrival, we need a clearer understanding of what AGI entails. Unlike the narrow AI we use today – systems designed to perform specific tasks like playing chess, recognizing faces, or generating text – AGI would theoretically possess common sense, creativity, and the ability to learn and adapt to novel situations without explicit programming. It would be a universal learner, capable of mastering any intellectual task a human can. The challenge? We don’t fully understand the mechanisms of human intelligence ourselves, making it incredibly difficult to define, let alone engineer, its artificial counterpart.

The chasm between narrow AI and general intelligence

Current AI, no matter how impressive, operates within predefined domains. Large Language Models (LLMs), such as those behind ChatGPT, can generate remarkably coherent text, but they don’t ‘understand’ in the human sense; they predict the most probable next word (more precisely, the next token) from patterns learned across vast datasets. Image recognition systems can identify objects with incredible accuracy, but they lack the contextual understanding of a child. These systems are powerful tools, but they are specialists, not generalists.

- Task-specific excellence: Modern AI excels at narrow, well-defined problems.

- Lack of common sense: AI struggles with intuitive understanding of the physical world or social dynamics.

- Limited transfer learning: An AI trained to play Go cannot suddenly write a novel or diagnose a medical condition without extensive retraining.

- Absence of true creativity: While AI can generate novel combinations, it doesn’t originate concepts from first principles or experience.

The leap from these highly specialized systems to a truly general intelligence is not merely an incremental step; it represents a fundamental paradigm shift, and current research has not yet shown how to make it.
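To make the idea of next-word prediction mentioned above more concrete, here is a deliberately tiny sketch. It is not how ChatGPT or any production LLM works; real models use neural networks trained on enormous corpora. The toy corpus and the function names `train_bigram_model` and `predict_next_word` are invented for illustration. The sketch only counts which word most often follows another, which is enough to show prediction from statistics without understanding.

```python
from collections import Counter, defaultdict


def train_bigram_model(text):
    """Count, for each word, which words follow it in the training text."""
    words = text.lower().split()
    follow_counts = defaultdict(Counter)
    for current_word, next_word in zip(words, words[1:]):
        follow_counts[current_word][next_word] += 1
    return follow_counts


def predict_next_word(model, word):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    followers = model.get(word.lower())
    if not followers:
        return None
    return followers.most_common(1)[0][0]


# Toy corpus, made up for this example.
corpus = "the cat sat on the mat . the cat chased the mouse . the cat slept"
model = train_bigram_model(corpus)

print(predict_next_word(model, "the"))  # "cat" follows "the" most often here
print(predict_next_word(model, "dog"))  # None: "dog" never appears in the corpus
```

The model answers confidently for words it has seen and has nothing to offer for a word outside its data, a small-scale picture of a specialist that is not a generalist.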

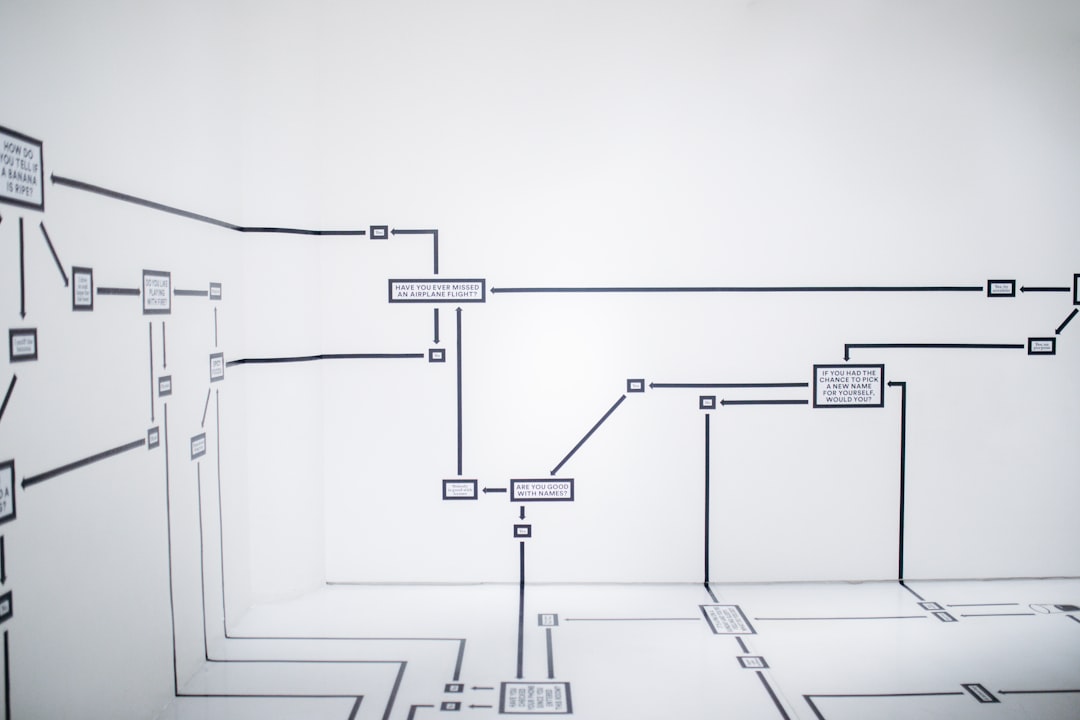

The unpredictable nature of emergent complexity

One of the core reasons AGI predictions are so speculative is the inherent unpredictability of emergent complexity. Intelligence, especially human-level intelligence, is an emergent property of incredibly complex biological systems. We don’t have a clear roadmap for how simple neural networks could scale or combine to produce consciousness, self-awareness, or general reasoning.

- Unknown unknowns: We might be missing fundamental theoretical breakthroughs required for AGI.

- Scaling isn’t enough: Simply adding more data or computational power to current models might hit diminishing returns or fundamental architectural limits.

- Defining progress: Without a clear understanding of what AGI looks like, it’s hard to measure progress towards it.

Predicting the exact timeline for such a profound and poorly understood phenomenon is akin to predicting the weather on an alien planet – we lack the foundational models and data.
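The point about diminishing returns can be illustrated with a little arithmetic. The sketch below assumes a purely hypothetical curve in which a model's error falls as a power of compute and can never drop below a fixed floor. The function `toy_loss` and every constant in it are made up for illustration and are not measurements from any real system.

```python
def toy_loss(compute, scale=10.0, exponent=0.1, floor=1.0):
    """Hypothetical error curve: a power law in compute plus an irreducible floor.

    All constants are illustrative assumptions, not fitted to real data.
    """
    return floor + scale * compute ** (-exponent)


# Compute budgets growing tenfold at each step: 1, 10, 100, ... 1,000,000.
budgets = [10 ** k for k in range(0, 7)]
losses = [toy_loss(c) for c in budgets]

# Improvement bought by each successive tenfold increase in compute.
gains = [prev - cur for prev, cur in zip(losses, losses[1:])]

for budget, gain in zip(budgets[1:], gains):
    print(f"compute x{budget:>9,}: error improves by {gain:.3f}")
```

Under these assumptions, every tenfold increase in compute buys a smaller improvement than the one before, and the error never falls below the floor. Whether real systems follow a curve like this, and where any floor might sit, is exactly the kind of open question that makes timelines hard to predict.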

The human tendency to anthropomorphize and sensationalize

Our fascination with AGI is deeply rooted in human psychology. We tend to anthropomorphize technology, projecting human qualities onto machines, especially those that mimic human communication or problem-solving. This, coupled with the media’s drive for sensationalism, often inflates the perceived capabilities of current AI and shortens the predicted timelines for AGI.

The narratives around AGI often swing between extreme optimism (a utopian future where AI solves all problems) and extreme pessimism (AI enslaving humanity). Both extremes, while compelling, distract from the practical realities of AI development and the more immediate ethical and societal challenges posed by *current* powerful narrow AI systems.

Navigating the future of intelligence with grounded insight

While the pursuit of AGI is a fascinating long-term scientific endeavor, it’s crucial for us to maintain a grounded perspective. The most impactful work in AI today lies in understanding, developing, and responsibly deploying the powerful narrow AI tools we already possess. Focusing too heavily on speculative AGI timelines can divert attention and resources from addressing the tangible benefits and risks of current AI.

At TechDecoded, we believe in demystifying technology. This means acknowledging the exciting potential of future AI while also critically evaluating the claims and predictions surrounding it. By understanding the limitations and the true nature of current AI, we can better prepare for a future where technology genuinely serves humanity, whether or not AGI ever fully materializes.
