Introduction: Beyond the training data

Imagine you’ve taught a child to identify cats by showing them hundreds of pictures of different felines. Now, if that child sees a cat they’ve never encountered before – perhaps a different breed, in a new setting, or from an unusual angle – and can still correctly identify it as a cat, they’ve demonstrated a powerful ability: generalization. In the world of artificial intelligence, this concept is just as vital, if not more so.

Model generalization is the cornerstone of truly intelligent AI. It’s the ability of an AI model to perform well and make accurate predictions or classifications on data it has never seen during its training phase. Without strong generalization, an AI model is little more than a sophisticated parrot, repeating what it has learned without true understanding. For AI to be useful in the real world, it must be able to adapt and apply its knowledge to new, unforeseen situations.

What exactly is model generalization?

At its core, model generalization refers to how well an AI model can extend its learned patterns from the training data to new, unseen data. Think of it as the difference between memorizing answers for a test and truly understanding the underlying concepts. A model that generalizes well has learned the fundamental relationships and features within the data, rather than just memorizing specific examples.

When an AI model is trained, it’s fed a large dataset. During this process, it adjusts its internal parameters to minimize errors on this training data. However, the ultimate goal isn’t just to perform perfectly on the data it has seen, but to make accurate predictions when faced with completely new inputs. If a model only performs well on its training data but fails on new data, it hasn’t generalized effectively.
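
As a rough illustration of the parameter adjustment described above, the toy sketch below fits a straight line by gradient descent on mean squared error. The data, the learning rate, and the number of steps are assumptions chosen for this example, not values from any particular system.

```python
# Toy training data: points on the line y = 2x + 1 (an assumed example).
train_x = [i / 10 for i in range(11)]
train_y = [2 * x + 1 for x in train_x]

def mse(w, b):
    """Mean squared error of the line w*x + b on the training data."""
    return sum((w * x + b - y) ** 2 for x, y in zip(train_x, train_y)) / len(train_x)

w, b = 0.0, 0.0       # the model's internal parameters, starting from zero
learning_rate = 0.1   # assumed step size
losses = []           # training error recorded before each update

for step in range(2000):
    losses.append(mse(w, b))
    # Gradients of the mean squared error with respect to w and b.
    grad_w = sum(2 * (w * x + b - y) * x for x, y in zip(train_x, train_y)) / len(train_x)
    grad_b = sum(2 * (w * x + b - y) for x, y in zip(train_x, train_y)) / len(train_x)
    # Move each parameter a small step in the direction that reduces the error.
    w -= learning_rate * grad_w
    b -= learning_rate * grad_b

print(f"w={w:.3f}, b={b:.3f}, first loss={losses[0]:.3f}, last loss={losses[-1]:.6f}")
```

A low training loss like this only shows that the model fits the data it was given. It says nothing yet about how the model behaves on new inputs.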

The enemies of generalization: Overfitting and underfitting

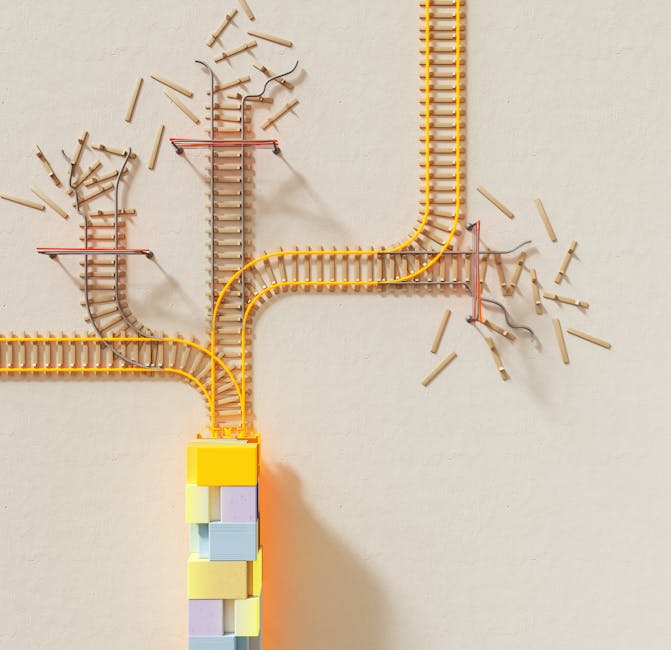

Two common pitfalls can severely hinder a model’s ability to generalize:

- Overfitting: This occurs when a model learns the training data too well, to the point where it starts to memorize noise and specific details rather than the underlying patterns. An overfit model will perform exceptionally on its training data but poorly on new, unseen data because it has essentially “memorized” the answers instead of understanding the problem. It’s like a student who memorizes every example problem but can’t solve a slightly different one.

- Underfitting: On the other end of the spectrum, underfitting happens when a model is too simple to capture the underlying patterns in the training data. It hasn’t learned enough from the data to make accurate predictions, even on the training set. An underfit model performs poorly on both training and unseen data, much like a student who hasn’t grasped the basic concepts at all.
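
Both pitfalls can be reproduced on a small scale. The sketch below uses a k-nearest-neighbors regressor, chosen here only as an assumed illustration: with k=1 the model memorizes every training point, and with k equal to the training set size it predicts roughly the same average everywhere. The sine-shaped data, the noise level, and the values of k are all made up for the example.

```python
import math
import random

def knn_predict(x, train_x, train_y, k):
    """Average the targets of the k training points closest to x."""
    nearest = sorted(range(len(train_x)), key=lambda i: abs(train_x[i] - x))[:k]
    return sum(train_y[i] for i in nearest) / k

def mse(xs, ys, train_x, train_y, k):
    """Mean squared error of the k-NN model on the points (xs, ys)."""
    errors = [(knn_predict(x, train_x, train_y, k) - y) ** 2 for x, y in zip(xs, ys)]
    return sum(errors) / len(errors)

rng = random.Random(0)

def sample(n):
    """Noisy samples of sin(x) on [0, 2*pi] (assumed toy data)."""
    xs = [rng.uniform(0, 2 * math.pi) for _ in range(n)]
    ys = [math.sin(x) + rng.gauss(0, 0.3) for x in xs]
    return xs, ys

train_x, train_y = sample(60)
test_x, test_y = sample(200)

results = {}
for k in (1, 7, 60):  # memorizing, moderate, and overly simple models
    train_err = mse(train_x, train_y, train_x, train_y, k)
    test_err = mse(test_x, test_y, train_x, train_y, k)
    results[k] = (train_err, test_err)
    print(f"k={k:2d}  train MSE={train_err:.3f}  test MSE={test_err:.3f}")
```

With k=1 the training error is zero because each training point is its own nearest neighbor, yet the test error is not. With k=60 the error is high on both sets. A moderate k will typically sit between these extremes on the training set and do best on the test set.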

How do we measure generalization?

Measuring generalization is critical to building effective AI. This is typically done by splitting your available data into distinct sets:

- Training set: The largest portion of data, used to train the model.

- Validation set: A separate dataset used during training to tune hyperparameters and detect overfitting. Because the model is never fitted to it, it shows how performance on unseen data changes as the model learns.

- Test set: A completely independent dataset, held back until the very end. This set is used to evaluate the final model’s performance and provide an unbiased estimate of its generalization ability. The model should never see this data during training or validation.
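
A three-way split like the one above can be sketched in a few lines. The function name `train_val_test_split`, the 60/20/20 proportions, and the fixed seed are assumptions for this example. Libraries such as scikit-learn provide their own utilities for the same job.

```python
import random

def train_val_test_split(items, val_frac=0.2, test_frac=0.2, seed=0):
    """Shuffle items and cut them into three disjoint lists."""
    shuffled = list(items)
    random.Random(seed).shuffle(shuffled)  # fixed seed keeps the split reproducible
    n_test = round(len(shuffled) * test_frac)
    n_val = round(len(shuffled) * val_frac)
    test = shuffled[:n_test]
    val = shuffled[n_test:n_test + n_val]
    train = shuffled[n_test + n_val:]
    return train, val, test

examples = list(range(100))  # stand-in for 100 labeled examples
train, val, test = train_val_test_split(examples)
print(len(train), len(val), len(test))  # 60 20 20
```

A random shuffle suits data whose examples are independent of one another. For time-ordered data, a split by date is usually the safer choice, so that the model is not evaluated on examples older than the ones it trained on.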

By comparing the model’s performance (e.g., accuracy, precision, recall, F1-score) on the training set versus the test set, we can gauge its generalization. A significant drop in performance on the test set compared to the training set is a strong indicator of poor generalization, often due to overfitting. Poor performance on both sets points to underfitting instead.
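
To make the comparison concrete, here is a minimal sketch that computes these metrics for a binary classifier and reports the gap between training and test accuracy. The label lists are invented for illustration and do not come from a real model.

```python
def accuracy(y_true, y_pred):
    """Fraction of predictions that match the true labels."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)

def precision_recall_f1(y_true, y_pred, positive=1):
    """Precision, recall, and F1-score for the chosen positive class."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == positive and p == positive for t, p in pairs)
    fp = sum(t != positive and p == positive for t, p in pairs)
    fn = sum(t == positive and p != positive for t, p in pairs)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1

# Hypothetical labels and predictions, made up for this example.
train_true = [1, 0, 1, 1, 0, 1, 0, 0]
train_pred = [1, 0, 1, 1, 0, 1, 0, 0]
test_true = [1, 0, 1, 0, 1]
test_pred = [1, 1, 0, 0, 1]

train_acc = accuracy(train_true, train_pred)
test_acc = accuracy(test_true, test_pred)
gap = train_acc - test_acc  # a large positive gap suggests overfitting
precision, recall, f1 = precision_recall_f1(test_true, test_pred)

print(f"train accuracy={train_acc:.2f}, test accuracy={test_acc:.2f}, gap={gap:.2f}")
print(f"test precision={precision:.2f}, recall={recall:.2f}, F1={f1:.2f}")
```

How large a gap counts as "significant" depends on the task and on how much data is in each set, so any threshold is a judgment call.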

Strategies to improve model generalization

Data scientists and AI engineers employ various techniques to encourage better generalization:

- More and diverse data: The more varied and representative the training data, the better the model can learn robust patterns. Data augmentation techniques (e.g., rotating images, adding noise) can artificially expand the dataset.

- Regularization: These techniques add a penalty to the model’s loss function for complexity, discouraging it from becoming too intricate and memorizing noise. Common types include L1 (Lasso) and L2 (Ridge) regularization.

- Cross-validation: A robust evaluation technique where the training data is split into multiple folds. The model is trained and validated multiple times, with each fold serving as the validation set once, and the scores are averaged to give a more reliable estimate of generalization.

- Early stopping: During training, the model’s performance on the validation set is monitored. Training is stopped once the validation error stops improving and starts to rise, even if the training error is still decreasing, which limits overfitting.

- Feature engineering and selection: Carefully choosing and transforming relevant features can help the model focus on the most important information, reducing noise and improving generalization.
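
Two of the techniques above, L2 regularization and cross-validation, are often used together: cross-validation scores each candidate penalty strength, and the best-scoring one is kept. The sketch below shows one way this could look for a small polynomial regression. The toy data, the polynomial degree, the candidate penalties, and every function name are assumptions for this example, and for simplicity the penalty is applied to the intercept as well.

```python
import math
import random

DEGREE = 6  # assumed polynomial degree for the toy model

def features(x):
    """Polynomial features 1, x, x^2, ..., x^DEGREE."""
    return [x ** d for d in range(DEGREE + 1)]

def solve(a, b):
    """Solve the linear system a w = b by Gaussian elimination with pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    w = [0.0] * n
    for r in range(n - 1, -1, -1):  # back substitution
        w[r] = (m[r][n] - sum(m[r][c] * w[c] for c in range(r + 1, n))) / m[r][r]
    return w

def fit_ridge(xs, ys, lam):
    """Minimize squared error plus lam * ||w||^2 via (X^T X + lam I) w = X^T y."""
    rows = [features(x) for x in xs]
    p = DEGREE + 1
    a = [[sum(r[i] * r[j] for r in rows) + (lam if i == j else 0.0)
          for j in range(p)] for i in range(p)]
    b = [sum(r[i] * y for r, y in zip(rows, ys)) for i in range(p)]
    return solve(a, b)

def mse(w, xs, ys):
    preds = [sum(wi * fi for wi, fi in zip(w, features(x))) for x in xs]
    return sum((p - y) ** 2 for p, y in zip(preds, ys)) / len(xs)

def k_fold_indices(n, k, seed=0):
    """Shuffle the indices 0..n-1 and deal them into k disjoint folds."""
    idx = list(range(n))
    random.Random(seed).shuffle(idx)
    return [idx[i::k] for i in range(k)]

def cross_val_mse(xs, ys, lam, k=5):
    """Average validation error when each fold is held out exactly once."""
    scores = []
    for held_out in k_fold_indices(len(xs), k):
        held = set(held_out)
        tr = [i for i in range(len(xs)) if i not in held]
        w = fit_ridge([xs[i] for i in tr], [ys[i] for i in tr], lam)
        scores.append(mse(w, [xs[i] for i in held_out], [ys[i] for i in held_out]))
    return sum(scores) / k

rng = random.Random(0)
xs = [rng.uniform(-1, 1) for _ in range(40)]            # assumed toy inputs
ys = [math.sin(3 * x) + rng.gauss(0, 0.2) for x in xs]  # noisy targets

candidates = [1e-4, 1e-2, 1.0, 100.0]  # assumed penalty strengths to compare
cv_scores = {lam: cross_val_mse(xs, ys, lam) for lam in candidates}
best_lam = min(cv_scores, key=cv_scores.get)
for lam in candidates:
    print(f"lambda={lam:g}  cross-validated MSE={cv_scores[lam]:.3f}")
print("selected lambda:", best_lam)
```

A very large penalty shrinks the weights toward zero and pushes the model toward underfitting, while a very small one leaves it free to chase noise. Cross-validation is one way to pick a value in between without touching the test set.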

Real-world impact: Why generalization matters

The ability to generalize is what makes AI truly valuable across countless applications:

- Self-driving cars: A car’s AI must generalize to recognize new obstacles, road conditions, and traffic scenarios it hasn’t explicitly encountered during training.

- Medical diagnosis: AI models for disease detection need to generalize to identify conditions in new patients with varying symptoms, demographics, and medical histories.

- Spam detection: An effective spam filter must generalize to identify new, evolving forms of spam that weren’t present in its initial training data.

- Natural language processing: Language models must generalize to understand and generate text in contexts and styles they haven’t seen before.

Building robust AI for a dynamic world

In a world where data is constantly changing and new challenges emerge daily, building AI models that can generalize is paramount. It’s the difference between a brittle system that breaks at the slightest deviation and a resilient one that can adapt and provide consistent value.

Focusing on generalization means moving beyond mere performance metrics on known data. It’s about striving for models that truly understand the underlying principles, making them trustworthy, reliable, and genuinely intelligent. As we continue to integrate AI into every facet of our lives, our ability to foster and measure strong generalization will dictate the success and impact of these powerful technologies.
