What is AI bias? Unpacking the hidden unfairness

Artificial intelligence is rapidly transforming our world, from recommending movies to powering medical diagnoses. While AI promises efficiency and innovation, it’s not immune to flaws. One of the most critical challenges facing AI today is bias – a systematic and repeatable error in a computer system’s predictions that creates unfair outcomes, often disproportionately affecting certain groups of people. At TechDecoded, we believe understanding these complexities is the first step toward building a more equitable technological future.

Imagine an AI system designed to be objective, yet it consistently makes decisions that disadvantage women, minorities, or specific age groups. This isn’t a glitch; it’s AI bias at play. It’s a reflection of the world it learns from, and unfortunately, our world isn’t always fair.

Where does AI bias come from? The roots of unfairness

AI systems learn from data. If that data is skewed, incomplete, or reflects existing societal prejudices, the AI can inherit those biases and, in some cases, amplify them. Understanding the sources is crucial:

- Biased training data: This is the most common culprit. If an AI is trained on historical data that contains human biases – for example, a hiring dataset where men were historically preferred for certain roles – the AI will learn to associate those preferences with success. It doesn’t invent bias; it mirrors the patterns it observes.

- Human bias in design and labeling: The people who design, develop, and label data for AI systems can inadvertently introduce their own biases. For instance, if the people labeling images for an image recognition system only ever tag women as brides, the system might fail to recognize a man in a wedding dress as a bride.

- Algorithmic design flaws: Sometimes, the very structure or objective function of an algorithm can inadvertently lead to biased outcomes. If an algorithm optimizes for a metric that is itself correlated with a biased outcome, it can perpetuate unfairness even with seemingly neutral data.

- Feedback loops: AI systems often operate in a loop. If a biased AI makes a decision (e.g., denying a loan), that decision can then influence future data (e.g., the person denied a loan has a harder time building credit), further entrenching the bias in subsequent training cycles.
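
The feedback loop described above can be sketched with a toy simulation. Everything here is an assumption made for illustration: the applicant names, the cutoff of 600, and the rule that an approval adds 10 points to a score while a denial subtracts 5. Real credit models are far more complex, but the sketch shows how a small starting gap can widen when each decision feeds the next one.

```python
def simulate_feedback_loop(scores, threshold=600, rounds=5, gain=10, penalty=5):
    """Toy model (hypothetical rules): approval raises a score, denial lowers it.

    Returns the list of score snapshots, starting with the initial scores.
    """
    history = [dict(scores)]
    for _ in range(rounds):
        # Each round's decision depends only on the score produced by earlier decisions.
        scores = {
            name: score + gain if score >= threshold else score - penalty
            for name, score in scores.items()
        }
        history.append(dict(scores))
    return history


# Two hypothetical applicants who start 15 points apart, on either side of the cutoff.
start = {"applicant_a": 610, "applicant_b": 595}
history = simulate_feedback_loop(start)

gap_start = history[0]["applicant_a"] - history[0]["applicant_b"]
gap_end = history[-1]["applicant_a"] - history[-1]["applicant_b"]
print(gap_start, gap_end)  # prints: 15 90
```

In this sketch nothing about the applicants changes except the decisions made about them, yet the gap grows from 15 to 90 points in five rounds.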

Real-world examples of AI bias in action

AI bias isn’t just a theoretical problem; it has tangible impacts on people’s lives. Here are a few prominent examples:

-

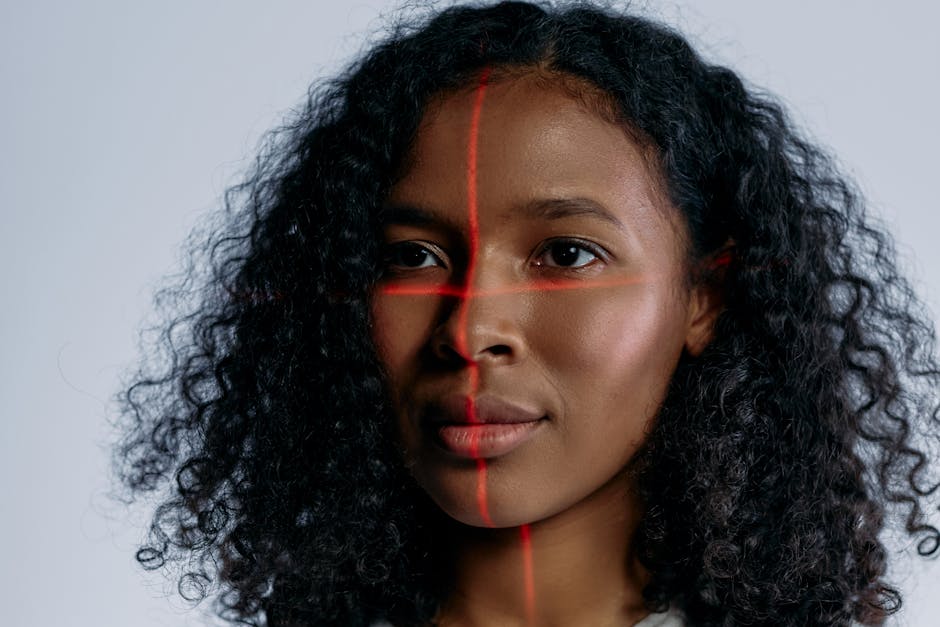

Facial recognition systems

Studies have shown that some facial recognition technologies are significantly less accurate at identifying women and people of color, leading to higher rates of misidentification and false arrests for these groups.

- Hiring and recruitment tools: In one widely reported case, an experimental AI recruiting tool was found to penalize resumes that included the word “women’s” or listed all-women’s colleges, simply because the historical data it learned from reflected a male-dominated workforce.

- Loan applications and credit scoring: AI systems used by financial institutions can inadvertently perpetuate historical lending biases, making it harder for certain demographics to access credit, even when their financial profiles are otherwise strong.

- Criminal justice and predictive policing: Algorithms used to predict recidivism or identify high-crime areas have been criticized for disproportionately flagging individuals from minority communities, potentially leading to increased surveillance and harsher sentencing.
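
What these examples have in common is that the system’s errors are not spread evenly across groups. One way to make that visible is to compare error rates per group. The sketch below computes a false positive rate (how often someone is wrongly flagged) for each group. The records, group names, and function name are hypothetical and made up purely for illustration; they are not drawn from any real system or study.

```python
from collections import defaultdict


def false_positive_rate_by_group(records):
    """records: iterable of (group, predicted_positive, actually_positive) tuples.

    Returns, per group, the share of actual negatives that were wrongly flagged.
    """
    false_positives = defaultdict(int)
    negatives = defaultdict(int)
    for group, predicted, actual in records:
        if not actual:  # only actual negatives can become false positives
            negatives[group] += 1
            if predicted:
                false_positives[group] += 1
    return {group: false_positives[group] / negatives[group] for group in negatives}


# Hypothetical audit records: (group, flagged by the system, truly positive).
records = [
    ("group_x", True, True),
    ("group_x", True, False),
    ("group_x", False, False),
    ("group_x", False, False),
    ("group_x", False, False),
    ("group_y", True, True),
    ("group_y", True, False),
    ("group_y", True, False),
    ("group_y", False, False),
    ("group_y", False, False),
]

rates = false_positive_rate_by_group(records)
print(rates)  # prints: {'group_x': 0.25, 'group_y': 0.5}
```

In this made-up data, people in group_y who should not have been flagged are flagged twice as often as those in group_x, which is the kind of gap an audit would want to surface.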

The profound impact of unchecked AI bias

The consequences of AI bias extend far beyond inconvenience. They can:

- Perpetuate and amplify societal inequalities: AI can hardwire existing prejudices into automated systems, making them harder to challenge.

- Erode trust in technology: When AI systems are perceived as unfair, public trust in these powerful tools diminishes.

- Lead to discriminatory outcomes: From job opportunities to healthcare access, biased AI can deny individuals fundamental rights and opportunities.

- Create legal and ethical challenges: Companies deploying biased AI face significant reputational, legal, and ethical risks.

Mitigating AI bias: steps toward fairness

Addressing AI bias requires a multi-faceted approach involving technology, policy, and human oversight:

- Diverse and representative data: Actively seek out and curate training data that accurately reflects the diversity of the real world, ensuring all groups are adequately represented.

- Bias detection and mitigation tools: Develop and use tools to identify and reduce bias in datasets and algorithms before deployment.

- Explainable AI (XAI): Build AI systems that can explain their decisions, allowing developers and users to understand why a particular outcome was reached and identify potential biases.

- Regular auditing and monitoring: Continuously test and monitor AI systems in real-world scenarios to detect emerging biases and ensure fair performance over time.

- Diverse development teams: Teams with varied backgrounds, perspectives, and experiences are more likely to identify and address potential biases in AI systems from the outset.

- Ethical AI guidelines and regulations: Establish clear ethical principles and regulatory frameworks to guide the development and deployment of AI, prioritizing fairness and accountability.
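
As a minimal sketch of what a bias detection check might look like, the example below compares how often each group receives a positive decision and reports the ratio between the lowest and highest rate. The function names and the decision data are assumptions for illustration only. A common rule of thumb, the “four-fifths rule” from US employment guidelines, treats a ratio below 0.8 as a signal worth investigating, not as proof of discrimination.

```python
def selection_rates(decisions):
    """decisions: list of (group, selected) pairs -> share of positive decisions per group."""
    totals, selected = {}, {}
    for group, was_selected in decisions:
        totals[group] = totals.get(group, 0) + 1
        selected[group] = selected.get(group, 0) + int(was_selected)
    return {group: selected[group] / totals[group] for group in totals}


def disparate_impact_ratio(rates):
    """Lowest selection rate divided by the highest (1.0 means equal rates)."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody was selected in any group, so the rates are equal
    return min(rates.values()) / highest


# Hypothetical decisions: 6 of 10 selected in group_a, 3 of 10 in group_b.
decisions = (
    [("group_a", True)] * 6 + [("group_a", False)] * 4
    + [("group_b", True)] * 3 + [("group_b", False)] * 7
)

rates = selection_rates(decisions)
ratio = disparate_impact_ratio(rates)
print(rates)  # prints: {'group_a': 0.6, 'group_b': 0.3}
print(ratio)  # prints: 0.5
```

A check like this is only a starting point. It says nothing about why the rates differ, which is where explainability, auditing, and human review come in.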

Navigating AI with ethical awareness

AI bias is a complex challenge, but it’s not insurmountable. By understanding its origins, recognizing its impact, and actively working towards mitigation, we can steer AI development towards a future that is not only intelligent but also fair and equitable. At TechDecoded, we believe that a human-friendly approach to technology means confronting its imperfections head-on. By fostering awareness and advocating for responsible AI practices, we can harness the true potential of AI to benefit everyone, without leaving anyone behind.
