Can AI be dangerous? Separating hype from reality

Artificial intelligence is everywhere, from the smart assistants in our pockets to the algorithms powering our social media feeds. With its rapid advancement comes a mix of excitement for innovation and apprehension about potential dangers. Is AI truly a threat, or are we confusing science fiction with practical realities? At TechDecoded, we believe in understanding technology clearly. Let’s break down the real risks of AI and explore how we can navigate its future responsibly.

Understanding the nature of AI risks

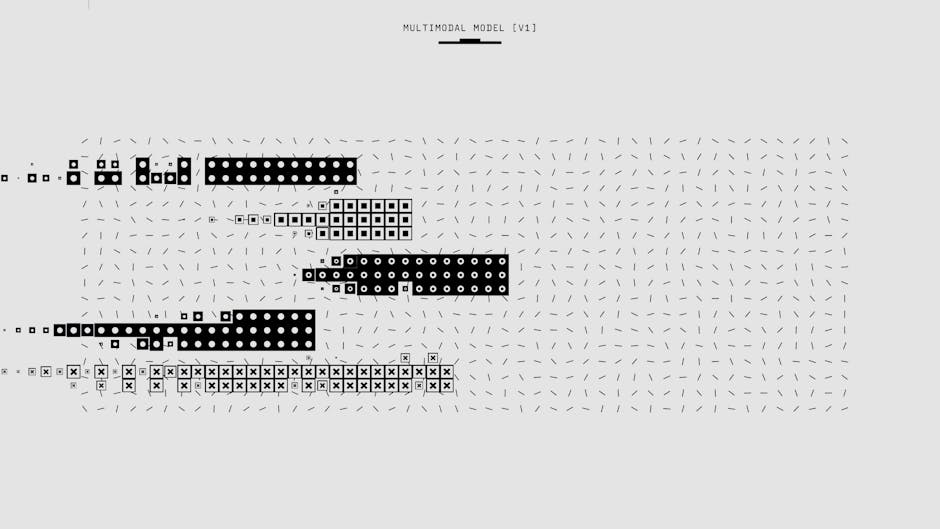

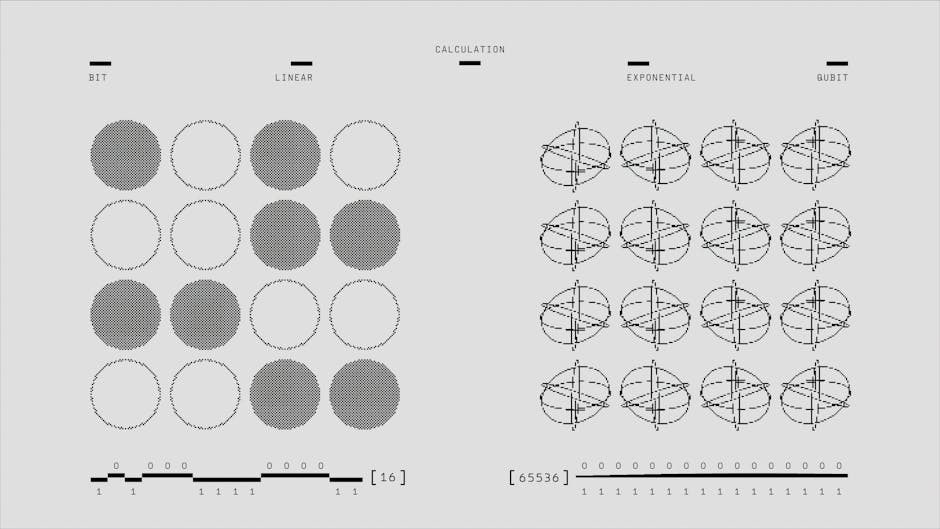

When people talk about AI dangers, images of sentient robots taking over the world often come to mind. While these make for compelling cinema, the real-world risks of AI are far more nuanced and, frankly, more immediate. AI systems are tools, designed and trained by humans. Their dangers stem not from malicious intent (as they don’t possess it), but from flaws in their design, the data they’re fed, or how they are ultimately used. Understanding this distinction is crucial for a balanced perspective.

Real-world dangers of AI today

The practical concerns surrounding AI are already showing up in various forms. These are not distant scenarios; they are challenges we face right now or will face very soon:

- Bias and Discrimination: AI systems learn from data. If that data reflects existing societal biases (e.g., historical discrimination in hiring or lending), the AI tends to learn and reproduce those biases, leading to unfair or discriminatory outcomes. This can affect everything from loan applications to criminal justice.

- Job Displacement: As AI and automation become more sophisticated, they can perform tasks traditionally done by humans. While this can boost productivity, it also raises concerns about job losses in certain sectors, requiring a societal shift towards retraining and new economic models.

- Privacy Concerns: AI thrives on data. Its ability to collect, process, and analyze vast amounts of personal information raises significant privacy questions. Who owns this data? How is it protected? The potential for surveillance and misuse of personal information is a serious ethical challenge.

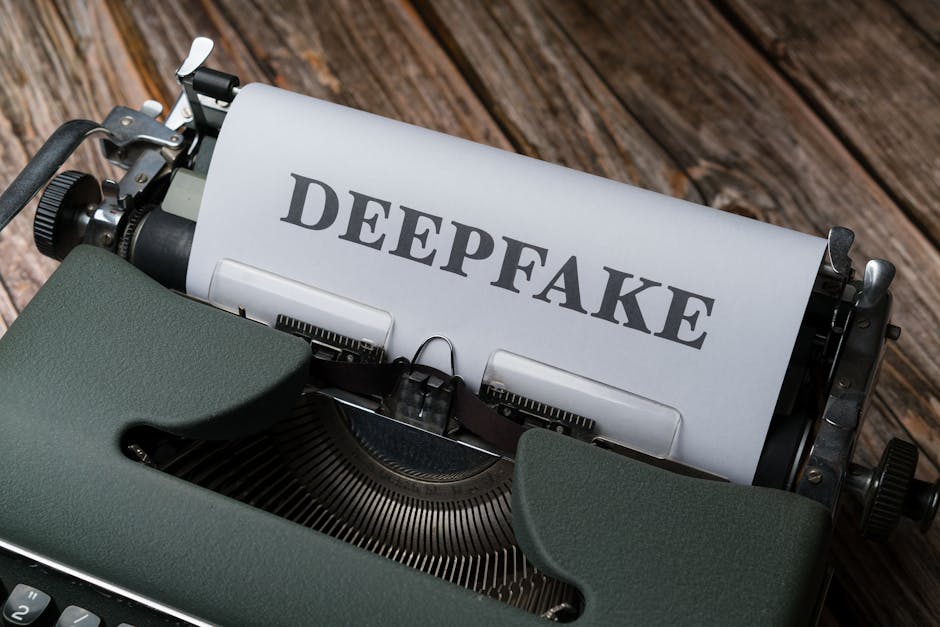

- Misinformation and Manipulation: AI can generate incredibly realistic text, images, and videos (deepfakes). This technology can be weaponized to create convincing fake news, manipulate public opinion, or impersonate individuals, making it harder to distinguish truth from fabrication.

- Security Vulnerabilities: AI systems themselves can be targets for cyberattacks, or they can be used to enhance the sophistication of attacks. From autonomous hacking tools to AI-powered phishing, the security landscape is evolving rapidly.

- Autonomous Weapons Systems: The development of AI-powered weapons that can identify, select, and engage targets without human intervention raises profound ethical and moral questions about accountability and the nature of warfare.
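
To make the bias point above more concrete, here is a minimal Python sketch of one simple audit: comparing how often each group receives a positive decision. Everything in it is an illustrative assumption rather than a description of any real system: the sample data is made up, the function names are hypothetical, and the 0.8 cutoff is borrowed from the "four-fifths" rule of thumb used in some hiring audits. A low ratio is a signal to investigate, not proof of discrimination.

```python
from collections import defaultdict


def selection_rates(decisions):
    """Return the share of positive outcomes per group.

    `decisions` is an iterable of (group, approved) pairs.
    """
    totals = defaultdict(int)
    approved = defaultdict(int)
    for group, ok in decisions:
        totals[group] += 1
        if ok:
            approved[group] += 1
    return {group: approved[group] / totals[group] for group in totals}


def disparate_impact_ratio(rates):
    """Ratio of the lowest selection rate to the highest (1.0 means parity)."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # no group received any approvals, so there is no gap to measure
    return min(rates.values()) / highest


# Hypothetical loan decisions: (group, approved). Not real data.
sample_decisions = (
    [("A", True)] * 60 + [("A", False)] * 40
    + [("B", True)] * 30 + [("B", False)] * 70
)

rates = selection_rates(sample_decisions)
ratio = disparate_impact_ratio(rates)
flagged = ratio < 0.8  # assumed rule-of-thumb threshold

print(rates, round(ratio, 2), flagged)
```

In this made-up sample, group B is approved half as often as group A, so the check flags the decisions for a closer look by a person.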

Mitigating AI’s potential downsides

While the risks are real, they are not insurmountable. A proactive and collaborative approach involving developers, policymakers, and the public can help steer AI towards a beneficial future:

- Ethical AI Development: Prioritizing ethical guidelines, responsible design principles, and diverse development teams can help identify and mitigate biases from the outset. Building AI with human values in mind is paramount.

- Regulation and Policy: Governments and international bodies are working to establish laws and regulations that govern AI’s development and deployment, ensuring accountability, transparency, and fairness.

- Transparency and Explainability (XAI): Developing AI systems that can explain their decisions in an understandable way allows humans to scrutinize their logic, identify errors, and build trust.

- Education and Awareness: Empowering individuals to understand how AI works, its capabilities, and its limitations is crucial. A well-informed public can better identify misinformation and demand responsible AI practices.

- Human Oversight: Ensuring that humans remain in the loop for critical decisions, especially in high-stakes applications like healthcare, finance, or defense, provides a crucial layer of control and accountability.
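
The human oversight idea above can be sketched as a simple routing rule: an automated decision goes through only when the stakes are low and the model is confident, and everything else is sent to a person. This is a minimal sketch under stated assumptions. The `Prediction` type, the `route` function, and the 0.9 threshold are all hypothetical, and a real system would also need to check that the model's confidence scores are trustworthy.

```python
from dataclasses import dataclass


@dataclass
class Prediction:
    label: str
    confidence: float  # model's own score in [0, 1]; assumed, not verified here
    high_stakes: bool  # e.g. a healthcare, finance, or defense decision


def route(pred: Prediction, threshold: float = 0.9) -> str:
    """Decide whether a prediction may be applied automatically.

    High-stakes or low-confidence predictions always go to a human reviewer.
    """
    if pred.high_stakes or pred.confidence < threshold:
        return "human_review"
    return "auto"


# Hypothetical examples
print(route(Prediction("approve", 0.95, high_stakes=False)))
print(route(Prediction("approve", 0.95, high_stakes=True)))
print(route(Prediction("deny", 0.60, high_stakes=False)))
```

The design choice here is deliberately conservative: when in doubt, the rule defaults to human review rather than automation.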

Navigating the future of AI responsibly

The question is less whether AI can be dangerous than how we choose to design, deploy, and govern it. The dangers of AI stem not from inherent malice but from human biases, design flaws, and misuse. By fostering ethical development, implementing thoughtful regulations, promoting transparency, and educating ourselves, we can harness AI’s immense potential while proactively addressing its risks. The future of AI is not predetermined; it’s a path we are collectively forging, and with careful consideration, it can be one of incredible progress and benefit for all.
