Unpacking the AI Model Dilemma: Bias and Variance

Ever wondered why some AI models perform brilliantly on data they’ve seen before, but stumble when faced with new information? Or why others seem to miss the mark entirely, even on familiar data? The answer often lies in two fundamental concepts: bias and variance. Understanding these isn’t just academic; it’s crucial for building reliable, effective AI systems that truly deliver on their promise. At TechDecoded, we believe in demystifying complex tech, so let’s break down bias and variance in a practical, human-friendly way.

What is Bias in AI? The Underfitting Problem

Imagine you’re trying to teach a child to identify different animals. If you only show them pictures of dogs and tell them ‘this is an animal,’ they might develop a very simplistic understanding. When they see a cat, a bird, or a fish, they might still call it ‘an animal’ but fail to distinguish its unique characteristics. This is akin to high bias in an AI model.

In machine learning, bias refers to the simplifying assumptions made by a model to make the target function easier to learn. A model with high bias makes too many assumptions, leading it to miss the relevant relationships between features and the target output. It’s too rigid and fails to capture the underlying patterns in the data. This often results in what we call underfitting.

Characteristics of high bias:

- The model is too simple for the data.

- It performs poorly on both training data and new, unseen data.

- Its errors are systematic rather than random: it misses the same patterns no matter which training sample it sees.

Think of it as trying to fit a complex, curvy path with a single straight line. It might capture the general direction, but it will miss all the nuances.
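Here is a minimal Python sketch of the straight-line picture. It uses an assumed toy setup. The true relationship is the curve y = x² plus Gaussian noise with standard deviation 0.5, so the noise variance is 0.25. The model is an ordinary least-squares straight line. The data-generating function and the helper names (`make_data`, `fit_line`, `mse`) are illustrative choices, not part of any library.

```python
import random

def make_data(n, seed):
    """Toy data (assumed): noisy samples of the curve y = x**2 on [-3, 3]."""
    rng = random.Random(seed)
    xs = [rng.uniform(-3, 3) for _ in range(n)]
    ys = [x * x + rng.gauss(0, 0.5) for x in xs]
    return xs, ys

def fit_line(xs, ys):
    """Ordinary least squares for y ≈ a + b*x; returns (a, b)."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    b = sxy / sxx
    return my - b * mx, b

def mse(a, b, xs, ys):
    """Mean squared error of the line a + b*x on the given points."""
    return sum((a + b * x - y) ** 2 for x, y in zip(xs, ys)) / len(xs)

train_x, train_y = make_data(200, seed=0)
test_x, test_y = make_data(200, seed=1)
a, b = fit_line(train_x, train_y)
train_err = mse(a, b, train_x, train_y)
test_err = mse(a, b, test_x, test_y)
print(f"train MSE = {train_err:.2f}, test MSE = {test_err:.2f} (noise variance is 0.25)")
```

A line cannot bend, so its errors on both the training and the test set land far above the 0.25 noise floor. Poor performance on both sets is the signature of high bias.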

What is Variance in AI? The Overfitting Problem

Now, let’s consider another child learning about animals. This child is shown hundreds of pictures of dogs, memorizing every single detail – the specific fur patterns, the exact shape of a particular dog’s ears, even the background of the photos. When shown a new dog that looks slightly different, or a dog in a new setting, they might struggle to identify it as a dog because it doesn’t perfectly match their memorized examples. This is similar to high variance.

Variance refers to the model’s sensitivity to small fluctuations or noise in the training data. A model with high variance essentially ‘memorizes’ the training data, including its noise and irrelevant details, rather than learning the general underlying patterns. While it performs exceptionally well on the training data, it struggles to generalize to new, unseen data. This phenomenon is known as overfitting.

Characteristics of high variance:

- The model is too complex for the data.

- It performs very well on training data but poorly on new data.

- It’s highly sensitive to the specific training examples.

Imagine trying to perfectly trace every single bump and dip of that complex, curvy path. You’d end up with a very wiggly line that fits the training path perfectly, but if the path shifts even slightly, your line would be way off.
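Polynomial interpolation is a literal version of the wiggly line. It finds the single polynomial that passes exactly through every training point. The sketch below assumes a toy setup: 15 evenly spaced inputs on [0, 1], targets sin(2πx) plus Gaussian noise with standard deviation 0.2, and test points placed halfway between the training inputs. The helper names are illustrative.

```python
import math
import random

def lagrange_predict(train_x, train_y, x):
    """Evaluate the degree len(train_x) - 1 polynomial through every training point."""
    total = 0.0
    for i, (xi, yi) in enumerate(zip(train_x, train_y)):
        basis = 1.0  # Lagrange basis: equals 1 at train_x[i] and 0 at every other training x
        for j, xj in enumerate(train_x):
            if j != i:
                basis *= (x - xj) / (xi - xj)
        total += yi * basis
    return total

def interp_mse(eval_x, eval_y, train_x, train_y):
    """Mean squared error of the interpolating polynomial on (eval_x, eval_y)."""
    errs = [(lagrange_predict(train_x, train_y, x) - y) ** 2 for x, y in zip(eval_x, eval_y)]
    return sum(errs) / len(errs)

rng = random.Random(0)
n = 15
train_x = [i / (n - 1) for i in range(n)]  # evenly spaced inputs on [0, 1]
train_y = [math.sin(2 * math.pi * x) + rng.gauss(0, 0.2) for x in train_x]

# New points halfway between the training inputs, with their own noise.
test_x = [(i + 0.5) / (n - 1) for i in range(n - 1)]
test_y = [math.sin(2 * math.pi * x) + rng.gauss(0, 0.2) for x in test_x]

train_err = interp_mse(train_x, train_y, train_x, train_y)
test_err = interp_mse(test_x, test_y, train_x, train_y)
print(f"train MSE = {train_err:.4f}, test MSE = {test_err:.4f}")
```

The training error is zero by construction, because the curve hits every noisy point. The error on the in-between points is not zero. The polynomial has bent itself around the noise, and with evenly spaced inputs the swings are typically worst near the ends of the interval.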

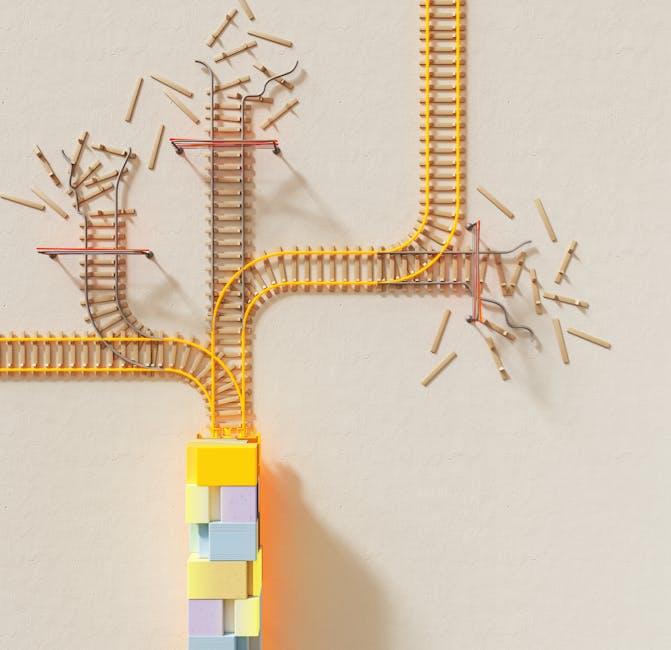

The Bias-Variance Trade-Off: Finding the Goldilocks Zone

Here’s the tricky part: with a fixed amount of training data, pushing bias down usually pushes variance up, and vice versa. This is known as the bias-variance trade-off. Generally:

- Increasing a model’s complexity tends to decrease bias but increase variance.

- Decreasing a model’s complexity tends to increase bias but decrease variance.
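Both rules can be checked empirically. The sketch below assumes a toy setup: the true function is sin(x), evaluated on a fixed grid of 40 inputs over [0, 2π], with Gaussian noise of standard deviation 0.3. It uses k-nearest-neighbour regression, where the number of neighbours k works as an inverse complexity knob. With k = 1 the model follows every noisy point, and with k = 40 it averages the whole training set into one constant. The code redraws the noise 200 times and refits each time. At a set of test inputs it then measures two quantities: how far the average prediction sits from the truth (bias²), and how much individual predictions scatter around that average (variance). The function names are illustrative.

```python
import math
import random

def true_f(x):
    return math.sin(x)

def knn_predict(train_x, train_y, x, k):
    """Average the targets of the k training inputs closest to x."""
    nearest = sorted(range(len(train_x)), key=lambda i: abs(train_x[i] - x))[:k]
    return sum(train_y[i] for i in nearest) / k

def bias_variance(k, n=40, noise=0.3, trials=200, seed=0):
    """Estimate average bias^2 and variance of k-NN over many redrawn training sets."""
    rng = random.Random(seed)
    train_x = [2 * math.pi * i / (n - 1) for i in range(n)]  # inputs stay fixed
    test_x = [2 * math.pi * (i + 0.5) / n for i in range(n)]
    preds = [[] for _ in test_x]
    for _ in range(trials):
        # Only the noise changes between trials, so spread in predictions is variance.
        train_y = [true_f(x) + rng.gauss(0, noise) for x in train_x]
        for j, x in enumerate(test_x):
            preds[j].append(knn_predict(train_x, train_y, x, k))
    bias_sq = variance = 0.0
    for x, p in zip(test_x, preds):
        mean_p = sum(p) / trials
        bias_sq += (mean_p - true_f(x)) ** 2
        variance += sum((v - mean_p) ** 2 for v in p) / trials
    return bias_sq / len(test_x), variance / len(test_x)

results = {k: bias_variance(k) for k in (1, 5, 15, 40)}
for k, (b2, var) in results.items():
    print(f"k={k:2d}  bias^2={b2:.4f}  variance={var:.4f}")
```

As k shrinks and the model becomes more complex, bias² falls and variance rises. With fixed inputs, an average of k noisy neighbours has variance of roughly noise²/k, which is why k = 1 is the most volatile setting.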

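Our goal isn’t to eliminate one or the other entirely, but to find the optimal balance – the ‘Goldilocks zone’ – where both bias and variance are acceptably low, leading to the best possible predictive performance on new data. This sweet spot minimizes the model’s total expected error on unseen data. That error is made up of error from bias, error from variance, and irreducible noise in the data that no model can remove.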
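For squared-error loss, ‘total error’ has a precise form. Assume the data are generated as y = f(x) + ε, where the noise ε has zero mean and variance σ² and is independent of the training set. Write \hat f for a model trained on a randomly drawn training set D. The expected error at an input x then splits into three parts:

```latex
\mathbb{E}_{D,\varepsilon}\!\left[\big(y - \hat f(x)\big)^2\right]
  = \underbrace{\big(\mathbb{E}_D[\hat f(x)] - f(x)\big)^2}_{\text{bias}^2}
  + \underbrace{\mathbb{E}_D\!\left[\big(\hat f(x) - \mathbb{E}_D[\hat f(x)]\big)^2\right]}_{\text{variance}}
  + \underbrace{\sigma^2}_{\text{irreducible noise}}
```

Only the first two terms depend on the model. The Goldilocks zone is therefore the level of complexity at which their sum is smallest, and the σ² term is a floor that no model can go below. Other loss functions have analogous decompositions, but not this exact one.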

Think of it as a balancing act. Too simple, and your model can’t learn enough (high bias). Too complex, and your model learns too much, including the noise (high variance). The ideal model is complex enough to capture the true patterns but simple enough to ignore the noise.

Practical Strategies to Manage Bias and Variance

Understanding bias and variance is the first step; the next is knowing how to address them in your AI projects.

Reducing Bias (Addressing Underfitting):

- Increase Model Complexity: Switch to a more flexible algorithm (for example, from a linear model to a tree-based or neural model), or add more layers/neurons to a neural network.

- Add More Features: Provide the model with more relevant information about the problem.

- Feature Engineering: Create new features from existing ones that might better represent the underlying patterns.

- Decrease Regularization: If using regularization, reduce its strength to allow the model more flexibility.
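The feature-based items above can be illustrated with a minimal sketch. It assumes a toy setup where the target follows y = x² plus Gaussian noise with standard deviation 0.5. The model is an ordinary least-squares straight line throughout, and the only thing that changes is the input it sees. Given an engineered feature z = x² instead of raw x, the same simple model can capture the curve. The helper names are illustrative.

```python
import random

def fit_line(xs, ys):
    """Ordinary least squares for y ≈ a + b*x; returns (a, b)."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    b = sxy / sxx
    return my - b * mx, b

def mse(a, b, xs, ys):
    """Mean squared error of the line a + b*x on the given points."""
    return sum((a + b * x - y) ** 2 for x, y in zip(xs, ys)) / len(xs)

rng = random.Random(0)
xs = [rng.uniform(-3, 3) for _ in range(200)]
ys = [x * x + rng.gauss(0, 0.5) for x in xs]  # assumed toy target

# Raw feature only: a straight line in x cannot follow the parabola (high bias).
a_raw, b_raw = fit_line(xs, ys)
raw_err = mse(a_raw, b_raw, xs, ys)

# Engineered feature z = x**2: still a straight line, but in z, which matches the true shape.
zs = [x * x for x in xs]
a_eng, b_eng = fit_line(zs, ys)
eng_err = mse(a_eng, b_eng, zs, ys)

print(f"training MSE with x: {raw_err:.2f}, with x**2: {eng_err:.2f}")
```

In practice the right transformation is rarely known in advance. Candidate features usually come from domain knowledge and are then checked on validation data.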

Reducing Variance (Addressing Overfitting):

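- More Training Data: The more (and more diverse) examples a model sees, the harder it is for it to latch onto noise specific to any one sample, so it tends to generalize better. Extra data helps far less when the model is underfitting.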

- Feature Selection/Reduction: Remove irrelevant or redundant features that might be introducing noise.

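- Regularization: Techniques like L1 (Lasso) and L2 (Ridge) regularization add a penalty on the size of the model’s weights to the training objective, discouraging overly complex fits. L1 can also shrink some weights to exactly zero, effectively removing those features.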

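- Cross-Validation: Techniques like k-fold cross-validation don’t reduce variance by themselves. They give a more robust estimate of performance on unseen data, which exposes overfitting and lets you tune hyperparameters (such as regularization strength) to the setting that generalizes best.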

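- Ensemble Methods: Combine multiple models to get more stable predictions. Bagging methods such as Random Forests average many high-variance models (for example, deep decision trees trained on different bootstrap samples), and the averaging reduces variance. Boosting methods such as Gradient Boosting work differently: they add models sequentially to correct earlier errors, which mainly reduces bias and can lead to overfitting if run for too long.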
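Cross-validation is easy to sketch from scratch. The example below assumes toy data: 200 points drawn at random from [0, 2π], with targets sin(x) plus Gaussian noise of standard deviation 0.3. It uses 5-fold cross-validation to choose the number of neighbours for k-nearest-neighbour regression, a model whose variance grows as that number shrinks. The function names are illustrative. In a real project you would normally use a library implementation, such as scikit-learn’s cross-validation utilities.

```python
import math
import random

def knn_predict(train_x, train_y, x, k):
    """Average the targets of the k training inputs closest to x."""
    nearest = sorted(range(len(train_x)), key=lambda i: abs(train_x[i] - x))[:k]
    return sum(train_y[i] for i in nearest) / k

def k_fold_mse(xs, ys, k_neighbors, folds=5, seed=0):
    """Mean validation MSE of k-NN regression across `folds` disjoint validation folds."""
    idx = list(range(len(xs)))
    random.Random(seed).shuffle(idx)  # same shuffle for every candidate, so scores are comparable
    fold_errors = []
    for f in range(folds):
        val = set(idx[f::folds])  # every `folds`-th shuffled index lands in fold f
        tr_x = [xs[i] for i in idx if i not in val]
        tr_y = [ys[i] for i in idx if i not in val]
        sq_err = sum((knn_predict(tr_x, tr_y, xs[i], k_neighbors) - ys[i]) ** 2 for i in val)
        fold_errors.append(sq_err / len(val))
    return sum(fold_errors) / folds

rng = random.Random(42)
xs = [rng.uniform(0, 2 * math.pi) for _ in range(200)]
ys = [math.sin(x) + rng.gauss(0, 0.3) for x in xs]

candidates = [1, 3, 5, 9, 15, 31, 159]  # 159 of 160 training points: nearly the training mean
scores = {k: k_fold_mse(xs, ys, k) for k in candidates}
best_k = min(scores, key=scores.get)
for k in candidates:
    print(f"k={k:3d}  cross-validated MSE={scores[k]:.3f}")
print("chosen k:", best_k)
```

The same loop works for any hyperparameter, such as a regularization strength. What matters is that each candidate is scored only on data it was not fitted to.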

Navigating the Path to Better AI Models

The journey to building robust AI models is iterative. It involves continuous experimentation, monitoring, and refinement. By understanding bias and variance, you gain powerful diagnostic tools. When your model isn’t performing as expected, you can now ask: Is it underfitting (high bias) or overfitting (high variance)? This insight guides your next steps, whether it’s gathering more data, simplifying your model, or exploring advanced techniques.
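That question can be turned into a rough rule of thumb. The sketch below is a heuristic, not a standard API. It takes two thresholds that you would set yourself for each problem. `target_error` is an error level you would accept, such as a known noise floor or human-level performance. `max_gap` is how much worse validation error may be than training error before you start to worry. Both names are hypothetical.

```python
def diagnose(train_error, val_error, target_error, max_gap):
    """Heuristic bias/variance check from error rates (lower error is better).

    target_error and max_gap are hypothetical, problem-specific thresholds.
    """
    findings = []
    if train_error > target_error:
        # The model cannot even fit the data it was trained on well enough.
        findings.append("high bias")
    if val_error - train_error > max_gap:
        # The model does much worse on held-out data than on its training data.
        findings.append("high variance")
    return findings  # an empty list means neither threshold was crossed

print(diagnose(0.30, 0.32, target_error=0.05, max_gap=0.05))  # → ['high bias']
print(diagnose(0.01, 0.25, target_error=0.05, max_gap=0.05))  # → ['high variance']
```

Both findings can appear together. A model can fit its training data poorly and still do noticeably worse on new data.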

Embrace the bias-variance trade-off as a fundamental principle in your AI development. It’s not about achieving zero bias or zero variance, but about finding that optimal balance that allows your models to learn effectively from data and generalize reliably to the real world. This practical understanding is what truly empowers you to build smarter, more dependable AI systems.
