Understanding AI’s core dilemma

In the rapidly evolving world of artificial intelligence, two qualities often stand in tension: accuracy and interpretability. On one hand, we push for AI models that deliver unparalleled precision, capable of tasks once thought impossible. On the other, we increasingly demand to understand how these models arrive at their conclusions. This isn’t just an academic debate; it’s a fundamental challenge shaping the future of practical, human-friendly AI.

At TechDecoded, we believe in demystifying complex tech. Today, we’re diving into this crucial trade-off, exploring why it exists, its implications, and how we can navigate it to build more effective and trustworthy AI systems.

The relentless pursuit of accuracy

For years, the primary goal in AI development has been to achieve the highest possible accuracy. Breakthroughs in deep learning have produced models that match or exceed human performance on specific, narrowly defined tasks, from recognizing faces to translating languages and flagging disease in medical images. As a rule, the more data we feed them and the more complex their architectures become, the better they perform.

- Image Recognition: Modern convolutional neural networks can identify objects, people, and scenes with incredible precision, powering everything from self-driving cars to medical imaging analysis.

- Natural Language Processing: Large language models generate human-like text, translate languages, and summarize documents, often achieving near-human fluency.

- Predictive Analytics: In finance, marketing, and logistics, highly accurate models predict trends and behaviors, optimizing operations and maximizing efficiency.

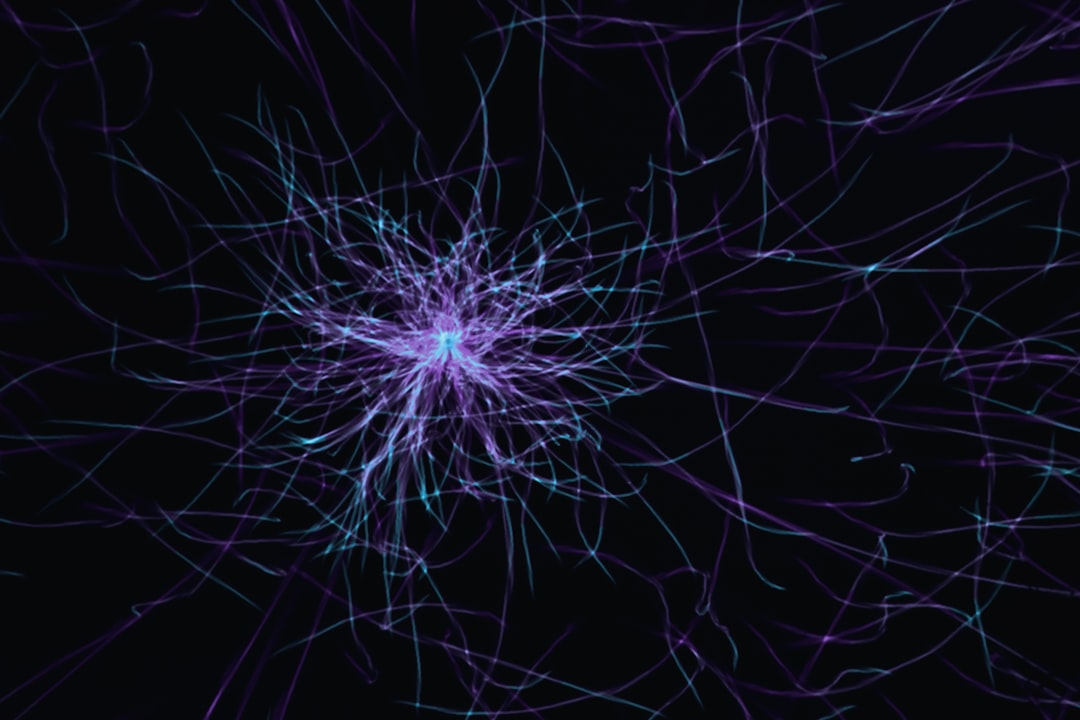

However, this pursuit of accuracy often comes at a cost: these powerful models frequently operate as ‘black boxes.’ Their internal workings are so intricate that even their creators struggle to fully explain their decision-making process.

Why interpretability is non-negotiable

While accuracy is vital, there are many scenarios where simply getting the right answer isn’t enough. We need to understand the ‘why’ behind an AI’s decision. This is where interpretability comes into play – the ability to explain or present the workings of an AI model in understandable terms.

- Building Trust: If an AI recommends a medical treatment or approves a loan, users and stakeholders need to trust that the decision is sound and fair. Understanding the reasoning fosters this trust.

- Ensuring Fairness and Ethics: Black-box models can inadvertently perpetuate or even amplify biases present in their training data. Interpretability helps us identify and mitigate these biases, ensuring equitable outcomes.

- Debugging and Improvement: When an AI makes a mistake, an interpretable model lets developers trace the error back to specific features or rules, which makes debugging faster and improvements more targeted.

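- Regulatory Compliance: Industries like finance and healthcare are subject to strict regulations. The GDPR, for example, restricts solely automated decision-making and is often read as granting a ‘right to explanation,’ although the scope of that right is debated. Interpretable AI makes these legal and ethical obligations far easier to meet.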

Imagine an AI misdiagnosing a patient or unfairly denying a credit application. Without interpretability, understanding the error and preventing future occurrences becomes incredibly difficult, if not impossible.

The inherent trade-off: A balancing act

The core of the dilemma lies in the nature of the models themselves. Generally, simpler models (like linear regression or shallow decision trees) are highly interpretable: you can read off each coefficient or trace the decision path step by step. However, these models often lack the capacity to capture nuanced patterns in large, high-dimensional datasets, leading to lower accuracy on complex tasks.

Conversely, highly accurate models (like deep neural networks or ensemble methods) achieve their performance by learning incredibly complex, non-linear relationships within data. This complexity, while powerful, makes their internal logic opaque and difficult to unravel. It’s like trying to understand a symphony by analyzing every single note played by every instrument simultaneously – the sheer volume of interactions makes it overwhelming.

This isn’t a universal law, and research is constantly pushing boundaries, but for now, it’s a widely accepted practical reality: increasing one often means compromising the other. The challenge is to find the optimal point on this spectrum for each specific application.
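
To make the interpretable end of the spectrum concrete, here is a minimal sketch of how a linear model's prediction can be traced term by term. The loan-scoring setup, the feature names, and the weights are all hypothetical, chosen only for illustration; nothing here comes from a real model.

```python
# Hypothetical linear scoring model. The weights, bias, and feature names
# are invented for illustration and do not come from any real system.
WEIGHTS = {"income_k": 0.04, "debt_ratio": -3.0, "years_employed": 0.25}
BIAS = -1.0


def explain_linear(features):
    """Return the score and each feature's additive contribution to it."""
    contributions = {name: WEIGHTS[name] * features[name] for name in WEIGHTS}
    score = BIAS + sum(contributions.values())
    return score, contributions


# A made-up applicant: income in thousands, debt-to-income ratio, job tenure.
applicant = {"income_k": 50, "debt_ratio": 0.5, "years_employed": 4}
score, contributions = explain_linear(applicant)

print(f"score = {score:.2f} (bias {BIAS:+.2f})")
# List features from most to least influential for this applicant.
for name, value in sorted(contributions.items(), key=lambda kv: -abs(kv[1])):
    print(f"  {name:>15}: {value:+.2f}")
```

Every term in the score is visible, so we can say exactly how much each feature raised or lowered it. A deep neural network offers no such direct readout, which is the gap that explanation techniques try to close.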

Navigating the dilemma: Strategies for practical AI

So, how do we navigate this fundamental trade-off? It requires a thoughtful, context-aware approach, often employing a combination of techniques:

- Explainable AI (XAI) Techniques: This is a rapidly growing field dedicated to making black-box models more understandable. LIME (Local Interpretable Model-agnostic Explanations) fits a simple surrogate model around a single prediction, while SHAP (SHapley Additive exPlanations) uses Shapley values from game theory to assign each feature a share of the prediction. Both show which features most influenced a specific prediction, even for complex models.

- Hybrid Models: Sometimes, a multi-model approach works best. A highly accurate black-box model might make an initial prediction, which is then validated or explained by a simpler, more interpretable model focusing on critical factors.

- Domain-Specific Interpretability: The level of interpretability required varies greatly. In high-stakes domains like autonomous driving or medical diagnosis, interpretability is paramount. For less critical applications, like recommending a movie, a highly accurate but less interpretable model might be perfectly acceptable.

- Feature Engineering and Selection: By carefully selecting and engineering features that are inherently meaningful and only weakly correlated with one another, we can build models that are both more accurate and easier to interpret from the outset.
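
To give a feel for the idea behind Shapley-based explanations, here is a minimal sketch that computes exact Shapley values for a tiny stand-in model. It is not the SHAP library's algorithm, which relies on approximations to scale to real models. The `black_box` function, the feature names, and the all-zeros baseline are assumptions made for illustration, and "removing" a feature is modeled here by resetting it to its baseline value.

```python
from itertools import combinations
from math import factorial


def shapley_values(predict, instance, baseline):
    """Exact Shapley attribution of predict(instance) - predict(baseline).

    A feature that is "absent" from a coalition keeps its baseline value.
    The cost grows exponentially with the number of features, so this is
    only practical for a handful of them.
    """
    names = list(instance)
    n = len(names)

    def value(present):
        mixed = {f: (instance[f] if f in present else baseline[f]) for f in names}
        return predict(mixed)

    phi = {}
    for f in names:
        others = [g for g in names if g != f]
        total = 0.0
        for k in range(len(others) + 1):
            # Shapley weight for coalitions of size k that exclude f.
            weight = factorial(k) * factorial(n - k - 1) / factorial(n)
            for coalition in combinations(others, k):
                present = set(coalition)
                total += weight * (value(present | {f}) - value(present))
        phi[f] = total
    return phi


def black_box(x):
    # Hypothetical opaque model with an interaction between b and c.
    return 2.0 * x["a"] + x["b"] * x["c"]


instance = {"a": 1.0, "b": 2.0, "c": 3.0}
baseline = {"a": 0.0, "b": 0.0, "c": 0.0}
phi = shapley_values(black_box, instance, baseline)

for name, contribution in phi.items():
    print(f"{name}: {contribution:+.2f}")
```

In this toy case the attributions add up to the gap between the model's output for the instance and for the baseline, and the interaction term is split evenly between `b` and `c`. The choice of baseline matters: a different reference point would give different attributions for the same prediction.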

The key is to move beyond simply chasing the highest accuracy metric and instead consider the broader impact and requirements of the AI system in its real-world context.

Building trustworthy AI for tomorrow

The trade-off between accuracy and interpretability isn’t a barrier to AI progress; it’s a critical design consideration. As AI becomes more integrated into our daily lives, the demand for transparency and accountability will only grow. By consciously addressing this dilemma, leveraging emerging XAI techniques, and adopting a human-centric approach to AI development, we can build systems that are not only powerful and precise but also understandable, fair, and ultimately, more trustworthy.

The future of AI isn’t just about making machines smarter; it’s about making them better partners for humanity. This means embracing the complexity of the accuracy-interpretability trade-off and actively working to find the right balance for every challenge we face.
