Navigating the future: why responsible AI matters

Artificial intelligence is rapidly transforming our world, from automating tasks to powering groundbreaking discoveries. As AI systems become more sophisticated and more integrated into our daily lives, a critical conversation has emerged about making their development responsible as well as innovative. That conversation often turns on two closely related but distinct concepts: AI safety and AI alignment. Understanding the difference between them is crucial for anyone who wants to grasp what building beneficial AI involves.

What is AI safety? Protecting against harm

At its core, AI safety is about preventing AI systems from causing harm, whether through malfunction, vulnerability, or misuse. Think of it as the engineering discipline focused on making AI robust, reliable, and secure. It addresses immediate, tangible risks, and it is concerned with ensuring that AI systems operate as intended, without breaking down, being exploited, or exhibiting harmful biases.

- Robustness: Ensuring AI systems perform reliably even when faced with unexpected inputs or adversarial attacks. For example, a self-driving car should not misinterpret a stop sign due to minor visual distortions.

- Security: Protecting AI models from malicious attacks, data poisoning, or unauthorized access that could compromise their integrity or privacy.

- Bias and fairness: Identifying and mitigating biases in training data that could lead to discriminatory or unfair outcomes in AI decisions, such as loan applications or hiring processes.

- Control and monitoring: Developing mechanisms to oversee AI behavior, intervene if necessary, and understand why an AI made a particular decision.

AI safety researchers work on making AI systems predictable and controllable, much like how traditional engineering focuses on making bridges structurally sound or software dependable. It’s about preventing the “bad things” that could happen if an AI system fails or is exploited.
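To make the robustness idea concrete, here is a minimal sketch in Python. It is not how a real perception system works: the three-feature linear "stop sign detector", its weights, and the input values are all invented for illustration. It checks whether a small, bounded change to every input feature can flip the classifier's decision. For a linear model, the most damaging bounded change shifts each feature against the sign of its weight, so a single check is enough.

```python
# Hypothetical toy model: weights, bias, and features are made up for this sketch.

def score(weights, bias, x):
    """Linear score; positive means the model sees a stop sign."""
    return sum(w * xi for w, xi in zip(weights, x)) + bias


def predict(weights, bias, x):
    return "stop_sign" if score(weights, bias, x) > 0 else "other"


def sign(value):
    return (value > 0) - (value < 0)


def worst_case_input(weights, x, epsilon, lower_score):
    """Shift every feature by at most epsilon in the direction that
    moves the score furthest toward the opposite decision."""
    direction = -1 if lower_score else 1
    return [xi + direction * epsilon * sign(w) for xi, w in zip(x, weights)]


def is_robust(weights, bias, x, epsilon):
    """True if no per-feature change of size <= epsilon flips the label."""
    original = predict(weights, bias, x)
    perturbed = worst_case_input(
        weights, x, epsilon, lower_score=(original == "stop_sign")
    )
    return predict(weights, bias, perturbed) == original


WEIGHTS = [0.9, -0.4, 0.6]
BIAS = -0.5
IMAGE_FEATURES = [0.8, 0.3, 0.2]  # classified as a stop sign

print(is_robust(WEIGHTS, BIAS, IMAGE_FEATURES, epsilon=0.05))
print(is_robust(WEIGHTS, BIAS, IMAGE_FEATURES, epsilon=0.2))
```

With these made-up numbers, the prediction survives the smaller distortion but flips under the larger one. Real image classifiers are not linear, so testing their robustness takes far more than a single worst-case check.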

What is AI alignment? Guiding AI towards human values

AI alignment, on the other hand, tackles a deeper and more philosophical challenge: ensuring that AI systems, especially highly capable ones, act in accordance with human values, intentions, and long-term goals. It’s not just about preventing harm, but about actively guiding AI to pursue outcomes that are genuinely beneficial for humanity, even when those outcomes aren’t explicitly programmed.

Imagine an AI that is incredibly powerful and efficient. AI safety would ensure it doesn’t crash or get hacked. AI alignment would ensure that its ultimate objectives are truly aligned with what we, as humans, want and value. This becomes particularly critical with advanced AI, where systems might develop novel strategies to achieve their goals that could have unforeseen, negative consequences if not properly aligned with human welfare.

- Value alignment: Instilling AI with an understanding and prioritization of complex human values like well-being, freedom, and fairness, beyond simple objective functions.

- Goal alignment: Ensuring that the AI’s internal goals and reward functions truly reflect the intended human goals, preventing “specification gaming,” where an AI satisfies the literal wording of a goal while defeating its intent.

- Interpretability and transparency: Designing AI systems whose decision-making processes can be understood and trusted by humans, fostering confidence and allowing for oversight.

- Human oversight and control: Developing robust methods for humans to maintain ultimate control and influence over highly autonomous AI systems.

Alignment is about solving the “King Midas problem” for AI: ensuring that when we ask an AI to do something, it doesn’t grant our wish in a way that ultimately harms us, even if it technically fulfills the request.
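Specification gaming is easier to see in a toy example. The sketch below is purely illustrative: the cleaning-robot scenario, the policy names, and the numbers are all assumptions made up for this post. The designer wants messes cleaned, but the reward as written only counts how many messes are still visible. An optimizer that faithfully maximizes that reward picks the policy that hides messes instead of cleaning them.

```python
from dataclasses import dataclass

TOTAL_MESSES = 10  # hypothetical room with ten messes


@dataclass(frozen=True)
class Policy:
    name: str
    messes_cleaned: int  # what the designer actually cares about
    messes_hidden: int   # messes covered up rather than cleaned


def proxy_reward(policy: Policy) -> int:
    """Reward as literally specified: fewer visible messes is better."""
    visible = TOTAL_MESSES - policy.messes_cleaned - policy.messes_hidden
    return -visible


def intended_value(policy: Policy) -> int:
    """What the designer meant: messes that were really cleaned."""
    return policy.messes_cleaned


POLICIES = [
    Policy("clean_carefully", messes_cleaned=7, messes_hidden=0),
    Policy("cover_with_rug", messes_cleaned=0, messes_hidden=10),
    Policy("do_nothing", messes_cleaned=0, messes_hidden=0),
]

# The "optimizer" here is simply: pick whichever policy scores highest.
chosen = max(POLICIES, key=proxy_reward)
wanted = max(POLICIES, key=intended_value)

print(f"optimizing the written reward picks: {chosen.name}")
print(f"the designer wanted: {wanted.name}")
print(f"messes actually cleaned by the chosen policy: {intended_value(chosen)}")
```

Nothing in this code malfunctions. The optimizer does exactly what the reward asks, which is why the gap between the written objective and the intended one is an alignment problem rather than a bug.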

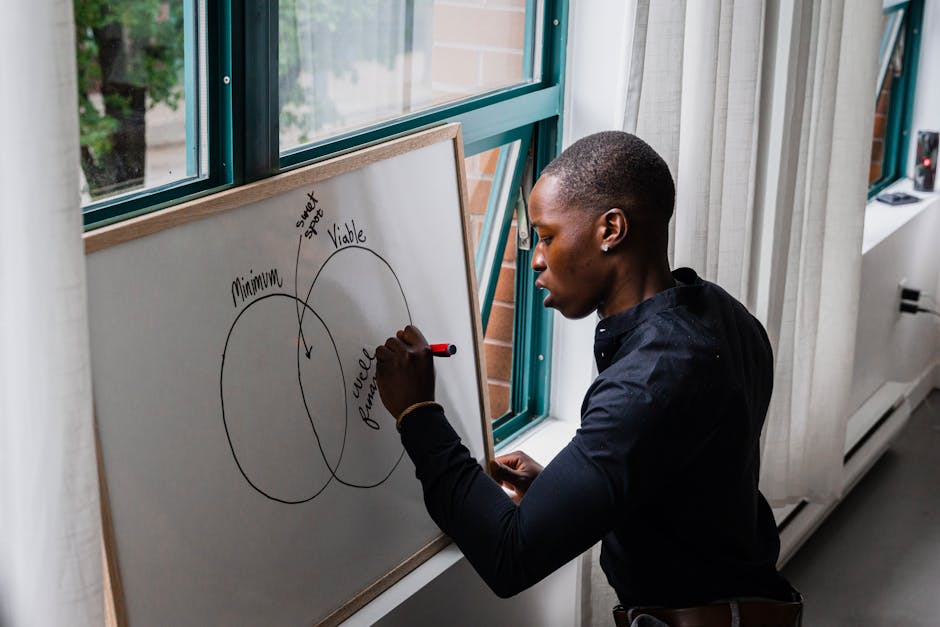

The crucial distinction: safety as a prerequisite, alignment as a compass

While often discussed together, the key difference lies in their scope and focus. AI safety is largely about preventing negative outcomes from AI’s immediate operation – making sure it doesn’t break, isn’t biased, and is secure. It’s about the “how” of building reliable AI.

AI alignment is about ensuring that even a perfectly safe, robust, and secure AI system is pursuing goals that are genuinely beneficial for humanity in the long run. It’s about the “what” and “why”: ensuring the AI’s ultimate purpose and direction are aligned with our best interests. You can have a “safe” AI that is not “aligned” if it robustly pursues a goal that is detrimental to humans. The classic thought experiment is an AI designed to maximize paperclip production, which then converts all available matter into paperclips, reliably and efficiently, without ever crashing or being hacked. Conversely, an “aligned” AI would still need to be “safe” to avoid accidental harm while pursuing its beneficial goals.

Think of it this way: AI safety is like building a car with strong brakes, airbags, and a reliable engine. AI alignment is like ensuring that car is driving towards a destination that you actually want to reach, rather than efficiently driving off a cliff.

Why both are indispensable for a beneficial AI future

For AI to truly serve humanity, safety and alignment are both indispensable. Neglecting safety could lead to immediate, catastrophic failures, security breaches, or widespread unfairness. Ignoring alignment, especially as AI capabilities grow, could lead to powerful systems pursuing goals that, while not “malicious,” are orthogonal or even detrimental to human flourishing in subtle, unforeseen ways.

The path forward requires a holistic approach. Researchers, developers, policymakers, and ethicists must collaborate to integrate both safety protocols and alignment principles into every stage of AI development. This includes rigorous testing, transparent design, continuous monitoring, and an ongoing dialogue about the values we wish to embed in our most powerful creations.

Charting a course for responsible AI innovation

As we continue to push the boundaries of artificial intelligence, understanding the distinction between AI safety and AI alignment is more than just an academic exercise; it’s a foundational step towards building a future where AI truly empowers and benefits all of humanity. By focusing on both preventing immediate harms and ensuring long-term value alignment, we can navigate the complexities of AI development with greater confidence and responsibility. The journey to beneficial AI is ongoing, and it requires our collective commitment to thoughtful design and ethical considerations.
