Imagine you’re applying for a loan, a job, or even just trying to get a medical diagnosis. You expect the system to be fair, objective, and treat everyone equally. But what if the artificial intelligence making those decisions isn’t? What if, without anyone even realizing it, the AI is making choices that are inherently unfair, simply because of the information it learned from?

This isn’t a sci-fi movie plot; it’s a very real challenge facing our increasingly AI-driven world. When AI systems are trained on data that carries existing human biases, they don’t just learn facts – they learn prejudices. And the consequences can ripple through our lives in surprising and often harmful ways.

What is Biased Data, Anyway?

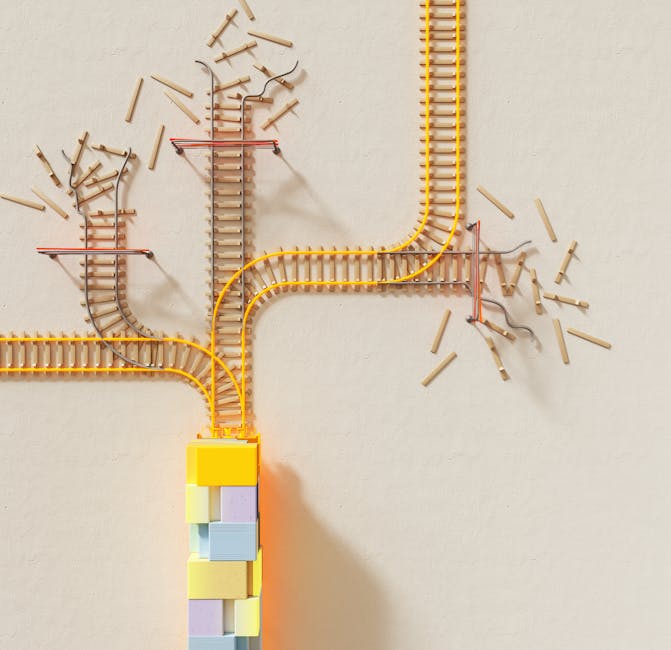

So, what exactly does “biased data” mean? Think of it this way: AI learns by looking at vast amounts of information, finding patterns, and making predictions based on those patterns. If the historical data it’s fed reflects past inequalities – for example, if a certain demographic was historically underrepresented in leadership roles, or if medical research predominantly focused on one group – the AI will simply assume those patterns are the “norm.” It doesn’t understand fairness; it just understands correlation.

This isn’t about AI intentionally being “mean.” It’s about AI faithfully reproducing and even amplifying the biases present in the data it was given. It’s the digital equivalent of “garbage in, garbage out.”
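To make that concrete, here is a minimal sketch of a "model" that does nothing but memorise historical patterns. Everything in it is an assumption made for illustration: the groups `A` and `B`, the invented hiring counts, and the helper names `fit_hire_rates` and `predict`. Real systems are far more complex, but the basic dynamic is the same: past outcomes become future recommendations.

```python
from collections import defaultdict

# Hypothetical historical hiring records: (group, was_hired).
# The counts are invented purely for illustration.
history = (
    [("A", True)] * 80 + [("A", False)] * 20 +
    [("B", True)] * 30 + [("B", False)] * 70
)

def fit_hire_rates(records):
    """'Train' by memorising the historical hire rate of each group."""
    hired = defaultdict(int)
    total = defaultdict(int)
    for group, was_hired in records:
        total[group] += 1
        hired[group] += int(was_hired)
    return {g: hired[g] / total[g] for g in total}

def predict(rates, group, threshold=0.5):
    """Recommend a candidate only if their group's past hire rate clears the threshold."""
    return rates[group] >= threshold

rates = fit_hire_rates(history)
print(rates)                # {'A': 0.8, 'B': 0.3}
print(predict(rates, "A"))  # True
print(predict(rates, "B"))  # False
```

Nothing in this code says "prefer group A." The preference comes entirely from the historical records, which is the point: the pattern in the data becomes the rule.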

Real-World Impact: Where Bias Shows Up

One of the most talked-about areas where this bias shows up is in hiring. Many companies now use AI tools to screen resumes or even conduct initial interviews. If the AI was trained on data from a company where, historically, most successful candidates for a certain role were men, it might inadvertently learn to favor male applicants, even if it’s not explicitly programmed to do so. This can lead to qualified women or other underrepresented groups being unfairly overlooked, perpetuating old inequalities.

It’s not just jobs. In healthcare, AI systems designed to diagnose diseases or recommend treatments can also exhibit bias. If the data used to train a diagnostic AI largely came from one ethnic group, it might perform less accurately when diagnosing patients from other backgrounds. This could lead to delayed or incorrect diagnoses, with serious health implications for those affected.
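One simple way to spot this kind of gap is to measure accuracy separately for each group rather than only overall. The sketch below assumes a hypothetical list of evaluation records with made-up group names and labels; `accuracy_by_group` is an illustrative helper, not part of any particular library.

```python
from collections import defaultdict

# Hypothetical evaluation records: (group, true_label, predicted_label).
# The values are invented to illustrate the idea.
results = [
    ("group_1", 1, 1), ("group_1", 0, 0), ("group_1", 1, 1), ("group_1", 0, 0),
    ("group_2", 1, 0), ("group_2", 0, 0), ("group_2", 1, 1), ("group_2", 1, 0),
]

def accuracy_by_group(records):
    """Return the fraction of correct predictions for each group."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for group, truth, pred in records:
        total[group] += 1
        correct[group] += int(truth == pred)
    return {g: correct[g] / total[g] for g in total}

# Overall accuracy across every record, ignoring group membership.
overall = sum(truth == pred for _, truth, pred in results) / len(results)
per_group = accuracy_by_group(results)

print(overall)    # 0.75
print(per_group)  # {'group_1': 1.0, 'group_2': 0.5}
```

An overall score of 75% looks respectable here, yet it hides the fact that the system is right every time for one group and only half the time for the other.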

Even in the justice system, AI tools are sometimes used to predict the likelihood of a defendant re-offending. If the training data reflects historical biases in policing or sentencing against certain communities, the AI might unfairly assign higher risk scores to individuals from those communities, potentially influencing bail decisions or parole outcomes. This can deepen existing social divides and undermine trust in the system.

The impact of biased AI isn’t always about grand societal issues; it can touch your daily life too. Have you ever noticed an online ad that felt completely off-base, or a recommendation system that seemed to miss the mark for you? Deep-seated bias isn’t always the cause, but these systems sometimes learn from skewed data about user preferences or demographics, which leads to less relevant or even frustrating experiences.

Moving Forward: Building Fairer AI

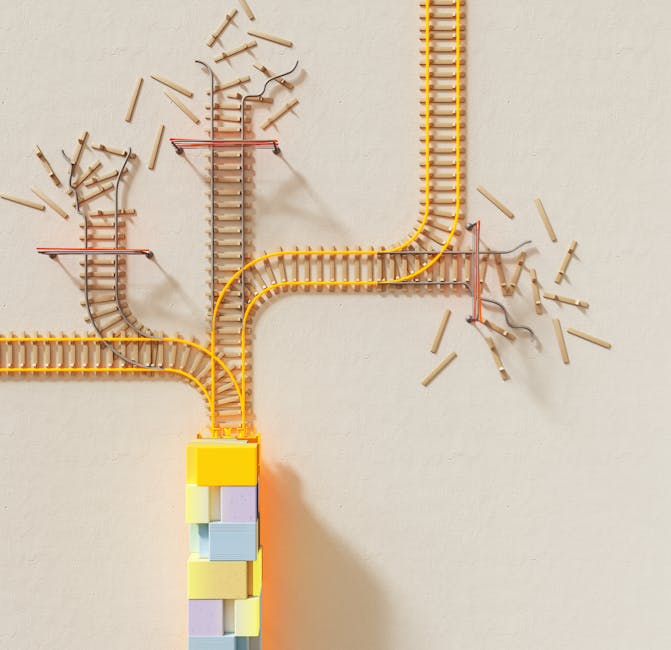

Understanding this challenge is the first step towards addressing it. It highlights why the people developing AI, and the data they use, matter so much. It’s not enough to just build powerful algorithms; we also need to ensure they are built on a foundation of fairness and equity.

So, what can be done? Addressing biased data requires a multi-faceted approach. It involves:

- Diverse Data Collection: Actively seeking out and including data from a wide range of demographics and situations to ensure AI learns from a more complete picture of the world.

- Careful Data Auditing: Regularly checking datasets for existing biases, and correcting them, before they are fed into AI systems.

- Ethical AI Design: Building AI with fairness metrics in mind, and developing ways to detect and mitigate bias during the training process.

- Human Oversight: Keeping humans in the loop to review AI decisions, especially in critical areas like healthcare, finance, and justice, to catch and correct errors or biases.
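As a rough illustration of what auditing and fairness metrics can look like in practice, the sketch below checks two things: how well each group is represented in a dataset, and how the rate of favourable decisions compares between groups. The data, group labels, and function names are all hypothetical. The 0.8 cut-off is borrowed from the "four-fifths" rule of thumb used in US employment guidance, and is included here as an assumption rather than a universal standard.

```python
from collections import Counter

def representation(groups):
    """Share of the dataset that belongs to each group."""
    counts = Counter(groups)
    return {g: c / len(groups) for g, c in counts.items()}

def selection_rates(groups, decisions):
    """Fraction of favourable decisions (1) received by each group."""
    selected, total = Counter(), Counter()
    for group, decision in zip(groups, decisions):
        total[group] += 1
        selected[group] += int(decision)
    return {g: selected[g] / total[g] for g in total}

def selection_rate_ratio(rates):
    """Lowest group rate divided by the highest; 1.0 means equal rates."""
    highest = max(rates.values())
    return min(rates.values()) / highest if highest > 0 else 1.0

# Hypothetical audit data: six people in group A, four in group B.
groups = ["A"] * 6 + ["B"] * 4
decisions = [1, 1, 1, 0, 1, 0] + [1, 0, 0, 0]

shares = representation(groups)
rates = selection_rates(groups, decisions)
ratio = selection_rate_ratio(rates)

print(shares)           # {'A': 0.6, 'B': 0.4}
print(round(ratio, 3))  # 0.375
if ratio < 0.8:  # assumed threshold, see the note above
    print("Flag for human review: selection rates differ widely between groups")
```

A check like this doesn’t prove a system is fair or unfair. It simply surfaces disparities that a human reviewer should then examine.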

The goal isn’t to stop AI development, but to ensure that as AI becomes more integrated into our lives, it serves everyone fairly and equitably. By understanding the hidden costs of biased data, we can push for more responsible AI that truly benefits all of humanity, rather than simply reflecting our past imperfections.
