{

“title”: “Avoid common AI mistakes: A practical guide”,

“meta”: “Learn how to sidestep common pitfalls when using AI tools. TechDecoded helps you understand AI’s limitations, craft better prompts, and ensure responsible, effective use.”,

“content_html”: “

Introduction: Navigating the AI landscape

Artificial intelligence is no longer a futuristic concept; it’s a powerful tool integrated into our daily lives and workflows. From generating content to analyzing complex data, AI promises unprecedented efficiency and innovation. However, like any powerful tool, AI comes with its own set of challenges and potential pitfalls. Many users, eager to harness its capabilities, fall into common traps that lead to frustration, inaccurate results, or even security risks.

At TechDecoded, we believe in making technology accessible and practical. This guide walks you through the most frequent mistakes people make when using AI and, more importantly, how to avoid them, so you can use AI effectively and responsibly.

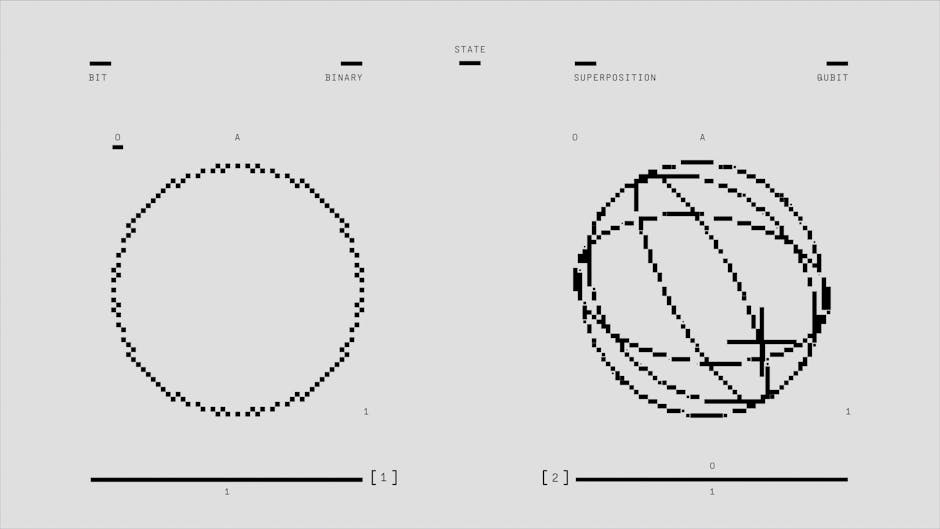

Understanding AI’s true nature and limitations

One of the biggest mistakes is treating AI as a sentient being or a magic bullet. An AI system is a sophisticated set of algorithms that processes data and performs tasks based on patterns learned during training. It doesn’t ‘think’ or ‘understand’ in the human sense.

- Overestimating AI capabilities: Expecting AI to solve complex, nuanced problems without human input or to possess general knowledge beyond its training data. AI excels at specific tasks, not universal problem-solving.

- Expecting human-like understanding: AI processes patterns, not meaning. It can generate coherent text, but it doesn’t comprehend the underlying concepts or implications in the way a human does.

How to avoid: Approach AI as a powerful assistant or a specialized tool. Understand its specific strengths (e.g., pattern recognition, data processing, content generation) and its inherent weaknesses (e.g., lack of common sense, inability to truly innovate, dependence on training data).

Crafting effective prompts for better results

The quality of AI output depends heavily on the quality of your input. Vague or poorly constructed prompts are a leading cause of unsatisfactory results, and they often lead users to conclude that the AI itself is flawed.

- Vague or ambiguous prompts: Asking open-ended questions without sufficient context or specific instructions. For example, “Write about marketing” is too broad; “Write a 200-word blog post about the benefits of email marketing for small businesses, focusing on ROI and ease of implementation” is much better.

- Not iterating on prompts: Treating the first prompt as the final one. AI often requires refinement. If the initial output isn’t perfect, don’t give up; refine your prompt, add constraints, or provide examples.

How to avoid: Be specific, provide context, and define the desired format, tone, and length. Use examples where possible. Think of prompt engineering as a conversation in which you guide the AI towards the desired outcome.
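
The following minimal Python sketch assembles a prompt from labelled parts, reusing the email-marketing example above. The `build_prompt` function and its field labels are hypothetical: they are a convention for illustration, not a format that any AI tool requires. The context line is also invented for the example. The output is plain text you would paste into whichever tool you use.

```python
def build_prompt(task, *, context=None, focus=None, fmt=None, tone=None,
                 length=None, examples=None):
    """Join the labelled prompt elements, skipping any that are left empty.

    The labels are a hypothetical convention for illustration; AI tools
    accept free text and do not require this layout.
    """
    fields = [
        ("Task", task),
        ("Context", context),
        ("Focus", focus),
        ("Format", fmt),
        ("Tone", tone),
        ("Length", length),
    ]
    lines = [f"{label}: {value}" for label, value in fields if value]
    if examples:
        # Optional sample outputs that show the AI the style you want.
        lines.append("Examples of what I want:")
        lines.extend(f"- {example}" for example in examples)
    return "\n".join(lines)


prompt = build_prompt(
    "Write a blog post about the benefits of email marketing for small businesses.",
    context="Readers run small businesses and have no dedicated marketing staff.",
    focus="ROI and ease of implementation",
    fmt="A short introduction followed by three subheadings",
    tone="Friendly and practical",
    length="About 200 words",
)
print(prompt)
```

Each element is a separate argument. Iterating then means adjusting one field (say, tightening `length` or adding `examples`) and regenerating, rather than rewriting the whole request.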

The critical need for human oversight and verification

AI models, especially large language models, are known to “hallucinate” – generating plausible-sounding but factually incorrect information. Blindly trusting AI output can lead to significant errors and misinformation.

- Blindly trusting AI output: Using AI-generated content or data without fact-checking or cross-referencing with reliable sources. This is particularly dangerous in fields requiring high accuracy, like medical, legal, or financial advice.

- Neglecting human oversight: Automating entire processes with AI without any human review points. AI should augment human capabilities, not replace critical thinking and verification.

How to avoid: Always verify critical information generated by AI. Treat AI output as a first draft or a starting point, not a final product. Implement a human review stage for all important AI-assisted tasks.
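
A review stage can be as simple as a rule that AI output cannot move forward until a person signs it off. The minimal Python sketch below models that rule. `Draft`, `approve`, and `publish` are hypothetical names for an in-house workflow, not part of any AI product. The actual checking, such as comparing claims against reliable sources, is still the reviewer’s job.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Draft:
    """AI-generated text that stays unpublished until a person signs it off."""
    text: str
    approved: bool = False
    reviewer: Optional[str] = None
    notes: List[str] = field(default_factory=list)


def approve(draft: Draft, reviewer: str, note: str = "") -> Draft:
    """Record that a named human has checked the draft."""
    draft.approved = True
    draft.reviewer = reviewer
    if note:
        draft.notes.append(note)
    return draft


def publish(draft: Draft) -> str:
    """Release the text only if a human review has happened."""
    if not draft.approved:
        raise PermissionError("AI-generated draft has not been reviewed by a human")
    return draft.text


draft = Draft("Summary of Q3 sales figures (AI-generated)")
# Calling publish(draft) at this point would raise PermissionError.
approve(draft, reviewer="editor", note="Checked every figure against the source report")
print(publish(draft))
```

The design choice is to make skipping review an error rather than a guideline. Recording who approved each draft also leaves a trail to follow if a mistake slips through later.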

Protecting your data and privacy with AI tools

When you interact with AI tools, especially cloud-based ones, you are often sharing data. Understanding how this data is used and protected is crucial to maintaining privacy and security.

- Inputting sensitive information: Sharing confidential company data, personally identifiable information (PII), or proprietary secrets with public AI models without understanding their data retention and usage policies.

- Not understanding data usage policies: Assuming your inputs are private or not used for training. Many free AI services use user inputs to further train their models, potentially exposing your data.

How to avoid: Read the terms of service and privacy policies of any AI tool you use. Avoid inputting sensitive or confidential information into public AI models. Consider using enterprise-grade AI solutions with robust data privacy agreements for business-critical tasks.
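
When some text has to go to a public AI tool, one extra safeguard is to strip obvious identifiers before sending it. The minimal Python sketch below does this with regular expressions. The patterns and the `redact` helper are illustrative assumptions: they catch common email and phone formats only, miss names, addresses, and account numbers, and may over-redact strings such as dates. This complements reading the tool’s policies; it does not replace that step.

```python
import re

# Illustrative patterns only (an assumption, not a complete PII detector):
# common email addresses and phone-number-like digit runs.
PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+"),
    "PHONE": re.compile(r"\+?\d[\d\s().-]{7,}\d"),
}


def redact(text):
    """Replace each match with a placeholder such as [EMAIL] or [PHONE]."""
    for label, pattern in PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text


print(redact("Contact jane.doe@example.com or +1 555-123-4567 about the renewal."))
```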

Navigating ethical considerations and inherent biases

AI models are trained on vast datasets, which often reflect existing societal biases. If the training data is biased, the AI’s output will likely be biased too, leading to unfair or discriminatory results.

- Ignoring potential biases: Using AI for decision-making (e.g., hiring, loan applications) without understanding and mitigating its inherent biases, which can perpetuate or amplify discrimination.

- Using AI for unethical purposes: Employing AI to generate misinformation or deepfakes, or to engage in other harmful activities.

How to avoid: Be aware that AI can carry biases. Critically evaluate AI outputs for fairness and equity, especially when used in sensitive contexts. Use AI responsibly and ethically, aligning its use with human values and societal good.
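
For screening decisions such as hiring, one concrete check is to compare how often each group receives a positive outcome. The minimal Python sketch below applies the ‘four-fifths rule’. This is a rule of thumb from US employment-selection guidelines: it treats a group’s selection rate below 80% of the highest group’s rate as a sign of possible adverse impact. The function names and the data are hypothetical. A flag is a reason for human investigation, not proof of bias, and passing the check does not prove fairness either.

```python
from collections import Counter


def selection_rates(decisions):
    """Share of positive outcomes per group; decisions are (group, selected) pairs."""
    totals, selected = Counter(), Counter()
    for group, was_selected in decisions:
        totals[group] += 1
        if was_selected:
            selected[group] += 1
    return {group: selected[group] / totals[group] for group in totals}


def flag_disparities(decisions, threshold=0.8):
    """Return groups whose selection rate is below threshold x the highest rate."""
    rates = selection_rates(decisions)
    highest = max(rates.values(), default=0)
    if highest == 0:
        return []  # no data or no selections: nothing to compare against
    return sorted(g for g, rate in rates.items() if rate / highest < threshold)


# Made-up screening results from a hypothetical AI-assisted hiring tool.
decisions = ([("A", True)] * 5 + [("A", False)] * 5
             + [("B", True)] * 3 + [("B", False)] * 7)
print(selection_rates(decisions))   # {'A': 0.5, 'B': 0.3}
print(flag_disparities(decisions))  # ['B'], since 0.3 / 0.5 = 0.6 < 0.8
```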

Integrating AI as an assistant, not a replacement

The most effective use of AI is often to augment human intelligence rather than replace it entirely. Trying to automate everything can cost you the human touch, critical oversight, and adaptability.

- Trying to automate everything: Believing AI can fully automate complex jobs or creative tasks without human intervention. This often leads to generic, uninspired, or error-prone outcomes.

- Not training users on AI tools: Rolling out AI tools without proper training for your team on how to use them effectively, understand their limitations, and integrate them into existing workflows.

How to avoid: Focus on using AI to enhance human productivity and creativity. Identify specific tasks where AI can assist (e.g., drafting, summarizing, data analysis) and integrate it thoughtfully into existing human-led processes. Invest in training your team to become ‘AI-literate’.

A smarter path to leveraging AI’s power

AI is a transformative technology, but its true potential is unlocked when approached with a clear understanding of its capabilities and limitations. By avoiding these common mistakes – from overestimating its intelligence to neglecting human oversight and data privacy – you can move beyond mere experimentation to truly harness AI as a powerful ally.

Embrace AI as a tool for augmentation, not replacement. Be curious, be critical, and always prioritize responsible and ethical use. With this mindful approach, you’ll not only avoid common pitfalls but also pave the way for innovative and impactful applications of artificial intelligence in your personal and professional life.

“,

“thumbnail_keyword”: “avoiding AI mistakes”,

“image_keywords”: [

“AI landscape navigation”,

“AI limitations diagram”,

“prompt engineering example”,

“fact-checking process”,

“data privacy padlock”,

“ethical AI scales”,

“human AI collaboration”

]

}
