Understanding the AI training marathon

Artificial intelligence is transforming industries, but behind every powerful AI lies a rigorous training process. One of the most common questions people ask is: “How long does it actually take to train an AI model?” The answer, much like AI itself, is complex and depends on a multitude of factors. It’s not a simple ‘plug-and-play’ scenario: depending on the task, training can take anywhere from minutes to months. At TechDecoded, we’re here to demystify this process, breaking down the key elements that influence AI training duration.

What exactly is AI model training?

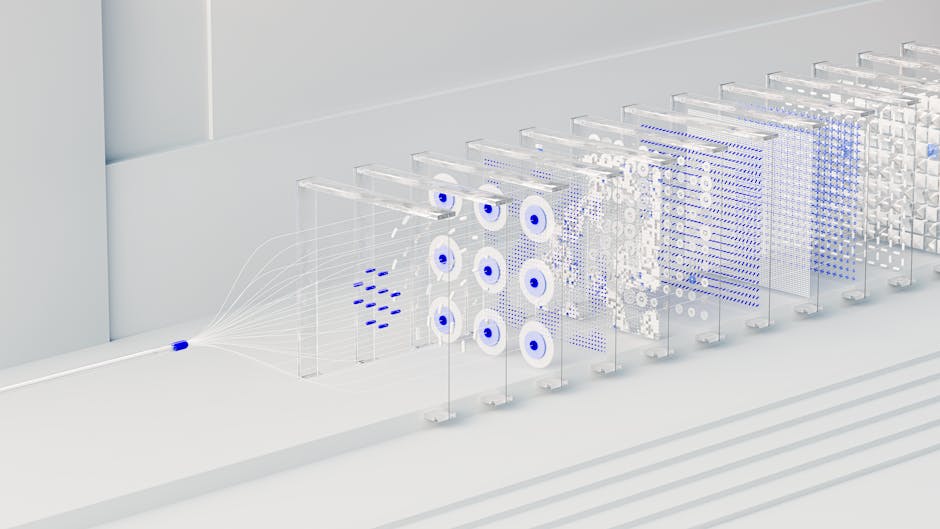

Before we dive into the ‘how long,’ let’s quickly define ‘what.’ AI model training is the process of feeding an algorithm vast amounts of data, allowing it to learn patterns, make predictions, or perform specific tasks. Think of it like teaching a child: you show them many examples, correct their mistakes, and over time, they learn to recognize objects or understand concepts. For an AI, this involves adjusting internal parameters (weights and biases) based on the training data to minimize errors and improve performance.
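To make “adjusting internal parameters to minimize errors” concrete, here is a minimal sketch of training the simplest possible model, a one-feature linear regression, with plain gradient descent on the mean squared error. The toy data and the hyperparameters (`lr`, `epochs`) are illustrative choices, not recommendations; real frameworks compute gradients automatically, but the loop has the same shape.

```python
def train_linear_regression(xs, ys, lr=0.05, epochs=2000):
    """Fit y ≈ w*x + b by gradient descent on the mean squared error."""
    w, b = 0.0, 0.0                       # the model's parameters: one weight, one bias
    n = len(xs)
    for _ in range(epochs):               # one epoch = one pass over the whole dataset
        errors = [(w * x + b) - y for x, y in zip(xs, ys)]
        grad_w = 2 / n * sum(e * x for e, x in zip(errors, xs))  # dMSE/dw
        grad_b = 2 / n * sum(errors)                             # dMSE/db
        w -= lr * grad_w                  # nudge each parameter against its gradient
        b -= lr * grad_b
    return w, b

xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [1.0, 3.0, 5.0, 7.0, 9.0]            # toy data generated from y = 2x + 1
w, b = train_linear_regression(xs, ys)
print(round(w, 4), round(b, 4))
```

After enough epochs the learned parameters settle very close to the true values w = 2 and b = 1; every larger model in this article is trained by a more elaborate version of this same loop.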

Key factors influencing AI training time

Several critical elements dictate how long an AI model will spend in training. Understanding these can help set realistic expectations for AI development projects.

The volume and quality of data

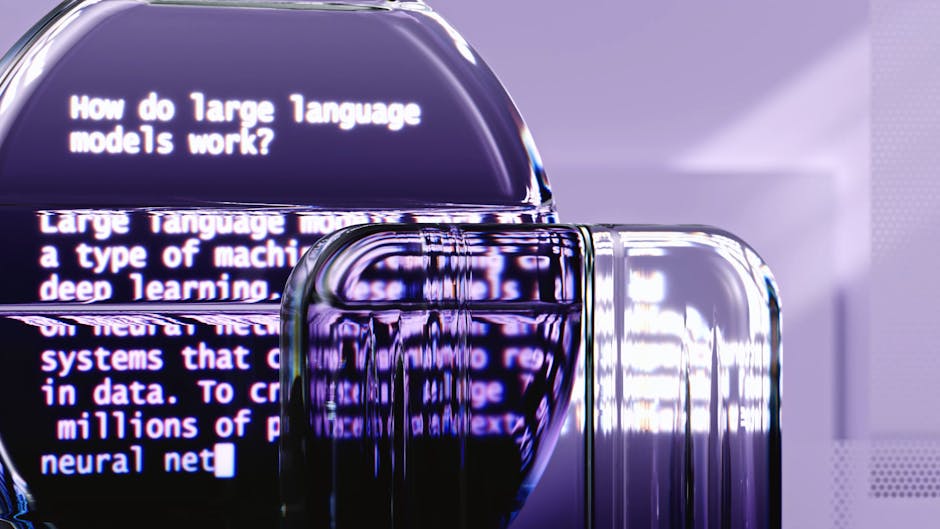

More data generally means more learning, but also more processing time. A small dataset for a simple task might train quickly, while a massive dataset (terabytes or petabytes) for a complex task like training a large language model (LLM) will take significantly longer. Data quality also plays a role; clean, well-labeled data can speed up convergence, whereas noisy data might require more iterations or pre-processing time.
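One reason data volume matters so directly: with mini-batch training, each epoch takes one optimizer step per batch, so the number of steps (and, roughly, the time) grows linearly with the number of examples. The helpers below are a hypothetical sketch; `seconds_per_step` is an assumed value you would measure on your own hardware, and the estimate ignores data loading and evaluation overhead.

```python
import math

def total_steps(num_examples, batch_size, epochs):
    """Optimizer steps in a run: one step per mini-batch, repeated every epoch."""
    steps_per_epoch = math.ceil(num_examples / batch_size)  # the last batch may be partial
    return steps_per_epoch * epochs

def estimated_hours(num_examples, batch_size, epochs, seconds_per_step):
    """Wall-clock estimate, assuming a constant (measured) time per step."""
    return total_steps(num_examples, batch_size, epochs) * seconds_per_step / 3600

small = total_steps(50_000, batch_size=128, epochs=10)
large = total_steps(500_000, batch_size=128, epochs=10)  # 10x the data
print(small, large)  # 3910 39070
```

Ten times the data means roughly ten times the steps for the same number of epochs, before any extra epochs a harder dataset might need.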

Model complexity and architecture

The more intricate the AI model, the longer it takes to train. A simple linear regression model might train in seconds, but a deep neural network with millions or even billions of parameters (like those used in advanced image recognition or natural language processing) demands immense computational effort and time. The architecture—how layers are structured and connected—also impacts training efficiency.
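Parameter count is a simple proxy for this complexity. In a fully connected (dense) network, each layer has one weight per input-output pair plus one bias per output, so widening layers grows the count roughly quadratically. The function below is an illustrative sketch for dense layers only; convolutional and transformer layers have their own formulas.

```python
def dense_param_count(layer_sizes):
    """Weights plus biases of a fully connected network with the given layer widths."""
    return sum(n_in * n_out + n_out for n_in, n_out in zip(layer_sizes, layer_sizes[1:]))

print(dense_param_count([10, 1]))                # linear regression on 10 features: 11
print(dense_param_count([784, 128, 10]))         # small network for 28x28 images: 101770
print(dense_param_count([784, 1024, 1024, 10]))  # wider, deeper network: 1863690
```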

Computational resources available

This is perhaps the most direct factor. Training an AI model is computationally intensive. The type and quantity of hardware you use make a huge difference:

- CPUs (Central Processing Units): Slower for parallel processing, suitable for smaller models or initial development.

- GPUs (Graphics Processing Units): Excellent for parallel computations, significantly accelerating deep learning training. Most modern AI training relies heavily on GPUs.

- TPUs (Tensor Processing Units): Custom accelerators built by Google for neural network workloads. They can be faster still for specific AI tasks and are available mainly through Google Cloud.

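The more powerful and numerous your GPUs or TPUs, the faster your model can potentially train. Distributed training across multiple machines can also drastically cut down time, although the speedup is usually less than linear because the machines must constantly exchange results.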
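Data-parallel training, a common form of distributed training, gives each device a different slice of the batch, has each one compute gradients locally, and then averages those gradients so every copy of the model applies the same update. The pure-Python sketch below simulates this on a toy linear model; the function names are hypothetical, and the plain average stands in for the all-reduce step that frameworks perform across real devices.

```python
def mse_grad(w, b, xs, ys):
    """Gradient of the mean squared error of y_hat = w*x + b over one batch."""
    n = len(xs)
    errors = [(w * x + b) - y for x, y in zip(xs, ys)]
    grad_w = 2 / n * sum(e * x for e, x in zip(errors, xs))
    grad_b = 2 / n * sum(errors)
    return grad_w, grad_b

def data_parallel_grad(w, b, xs, ys, num_workers):
    """Simulate data parallelism: one equal shard per worker, then average the gradients."""
    if len(xs) % num_workers != 0:
        raise ValueError("this sketch assumes the batch splits into equal-sized shards")
    shard = len(xs) // num_workers
    local = [
        mse_grad(w, b, xs[i * shard:(i + 1) * shard], ys[i * shard:(i + 1) * shard])
        for i in range(num_workers)
    ]
    # In a real cluster, this averaging step is an all-reduce across devices.
    grad_w = sum(g[0] for g in local) / num_workers
    grad_b = sum(g[1] for g in local) / num_workers
    return grad_w, grad_b

xs = [float(i) for i in range(8)]
ys = [2 * x + 1 for x in xs]
full = mse_grad(0.5, 0.0, xs, ys)
parallel = data_parallel_grad(0.5, 0.0, xs, ys, num_workers=4)
print(full, parallel)  # equal up to floating-point rounding
```

With equal-sized shards, the average of the per-shard gradients equals the full-batch gradient, so splitting the work changes who computes each step, not the update itself. The time saved per step is what shortens training, minus the cost of the exchange.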

Algorithm choice and optimization

Different AI algorithms have varying training characteristics. Some converge faster than others. Furthermore, the choice of optimizer (e.g., Adam, SGD, RMSprop) and its configuration (learning rate, momentum) can profoundly impact how quickly a model learns and reaches optimal performance. Poor optimization can lead to slow training or even prevent the model from learning effectively.
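The effect of the optimizer and its settings is easy to see on a toy problem. The sketch below minimizes an elongated quadratic bowl (one steep direction and one shallow one, an assumed stand-in for a real loss surface) with plain gradient descent and with heavy-ball momentum, counting steps until the parameters are within a small tolerance of the minimum. The function name, curvatures, and hyperparameters are illustrative assumptions; Adam and RMSprop are not shown.

```python
import math

def steps_to_converge(lr, beta, curvatures=(1.0, 100.0), tol=1e-6, max_steps=10_000):
    """Minimize f(w) = 0.5 * sum(c_i * w_i**2) with gradient descent.

    beta = 0.0 gives plain gradient descent; beta > 0 adds heavy-ball momentum.
    Returns the step count when ||w|| < tol, or None if the run diverges or stalls.
    """
    w = [1.0] * len(curvatures)       # starting point
    v = [0.0] * len(curvatures)       # velocity: decaying sum of past gradients
    for step in range(1, max_steps + 1):
        for i, c in enumerate(curvatures):
            grad = c * w[i]           # df/dw_i
            v[i] = beta * v[i] + grad
            w[i] -= lr * v[i]
        norm = math.sqrt(sum(x * x for x in w))
        if norm < tol:
            return step
        if norm > 1e6:                # iterates are blowing up: treat as diverged
            return None
    return None

plain = steps_to_converge(lr=0.01, beta=0.0)
momentum = steps_to_converge(lr=0.01, beta=0.9)
too_large = steps_to_converge(lr=0.025, beta=0.0)
print(plain, momentum, too_large)
```

With these settings, momentum reaches the tolerance in far fewer steps than plain gradient descent, while a learning rate only slightly too large for the steep direction makes plain gradient descent diverge, the “prevent the model from learning effectively” failure described above.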

Desired accuracy and performance

Training isn’t just about running the code; it’s about achieving a desired level of accuracy or performance. Reaching 80% accuracy might be quick, but squeezing out another 5-10 percentage points to hit 85-90% can take disproportionately longer, requiring more epochs (passes through the entire dataset) and more fine-tuning.
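One way to see why the last few points are expensive: if error fell as a power law in training steps, error(t) = a * t^(-b), then the steps needed to reach a target error would scale as (a / error)^(1/b). The sketch below assumes hypothetical values a = 1 and b = 0.5; real learning curves differ by task and model, so treat this as an illustration of diminishing returns, not a prediction.

```python
def steps_for_error(target_error, a=1.0, b=0.5):
    """Steps to reach target_error under an ASSUMED power-law curve error(t) = a * t**(-b)."""
    return (a / target_error) ** (1.0 / b)

for accuracy in (0.80, 0.85, 0.90):
    error = 1.0 - accuracy
    print(f"{accuracy:.0%} accuracy -> ~{steps_for_error(error):.0f} steps (arbitrary units)")
```

Under these assumptions, going from 80% to 90% accuracy halves the error and therefore takes four times as many steps.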

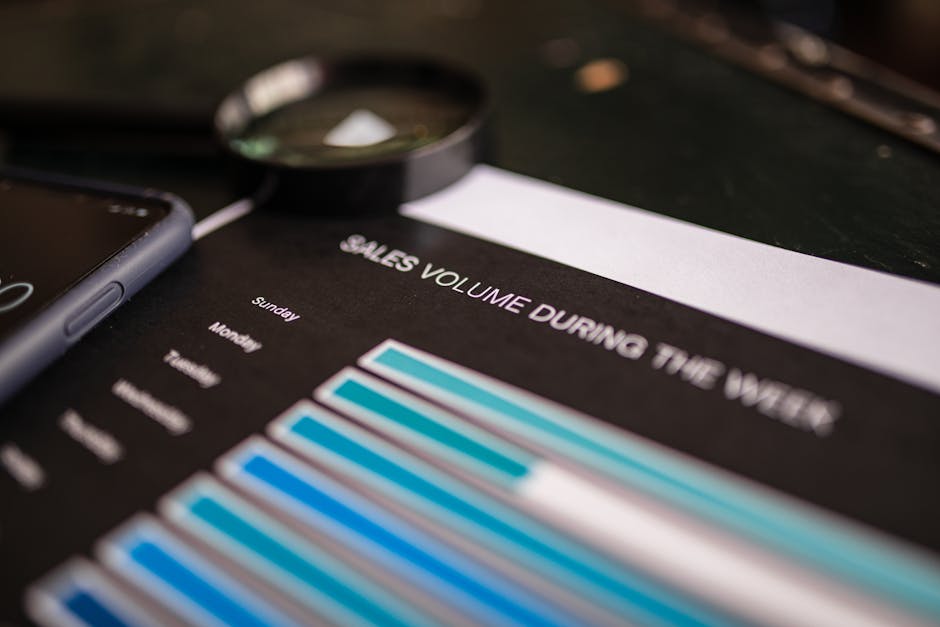

Real-world training time examples (a broad spectrum)

To give you a clearer picture, here’s a general idea of training times for different types of AI models:

- Simple Machine Learning Models (e.g., Linear Regression, Decision Trees): Minutes to a few hours on a standard CPU, depending on data size.

- Image Classification (e.g., identifying cats vs. dogs): A few hours to a few days on a single GPU for a moderately sized dataset when starting from a pre-trained model (transfer learning). Training from scratch can take longer.

- Natural Language Processing (e.g., sentiment analysis, basic chatbots): Several hours to about a week; the upper end, and multi-GPU setups, are typical of larger transformer models.

- Large Language Models (LLMs) & Advanced Generative AI: Weeks to many months, often requiring hundreds or thousands of GPUs/TPUs running in parallel. Models like GPT-3 or LLaMA took immense computational resources and time to train.
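The LLM range above can be sanity-checked with a common back-of-envelope rule for dense transformer models: training costs roughly 6 floating-point operations per parameter per training token (about 2 for the forward pass and 4 for the backward pass). The sketch below turns that into wall-clock days. The function name and every input value (parameter count, token count, per-accelerator peak FLOP/s, utilization) are hypothetical placeholders, not figures for any real model or chip.

```python
SECONDS_PER_DAY = 86_400

def estimate_training_days(params, tokens, num_accelerators,
                           peak_flops_per_accelerator, utilization):
    """Rough wall-clock estimate using the ~6 * params * tokens rule of thumb.

    All arguments are assumptions you supply; utilization is the fraction of
    peak throughput actually sustained (often well below 1.0 in practice).
    """
    total_flops = 6 * params * tokens
    sustained_flops_per_second = num_accelerators * peak_flops_per_accelerator * utilization
    return total_flops / sustained_flops_per_second / SECONDS_PER_DAY

# Hypothetical run: 1B parameters, 20B tokens, 100 TFLOP/s peak per accelerator, 40% utilization.
days_8 = estimate_training_days(1e9, 20e9, 8, 100e12, 0.4)
days_64 = estimate_training_days(1e9, 20e9, 64, 100e12, 0.4)
print(f"{days_8:.1f} days on 8 accelerators, {days_64:.1f} days on 64")
```

These made-up inputs give about 4.3 days on 8 accelerators and about half a day on 64. The 64-accelerator figure assumes perfect linear scaling, which the formula builds in but real distributed runs do not achieve; scaling parameters and tokens up by orders of magnitude is what pushes frontier models into weeks or months.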

Strategies to accelerate AI training

Developers aren’t just passively waiting; they employ various strategies to speed up the training process:

- Transfer Learning: Reusing a pre-trained model as a starting point for a new, related task. This can drastically cut down training time as the model already understands basic features.

- Distributed Training: Splitting the training workload across multiple GPUs, machines, or even data centers.

- Cloud Computing: Leveraging scalable cloud infrastructure (AWS, Google Cloud, Azure) to access powerful, on-demand computational resources without large upfront hardware investments.

- Optimized Frameworks & Libraries: Using highly optimized deep learning frameworks like TensorFlow or PyTorch, which are designed for efficiency.

- Data Augmentation: Creating new training examples from existing data (e.g., rotating images) to effectively increase dataset size without collecting new data.
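Of the strategies above, data augmentation is the simplest to show in a few lines. The sketch below treats an image as a small 2-D list of pixel values and generates its rotated and mirrored copies (the eight symmetries of a square). The function names are hypothetical; real pipelines typically apply random transforms on the fly through their framework’s image utilities.

```python
def rotate90(image):
    """Rotate a 2-D image (a list of rows) 90 degrees clockwise."""
    return [list(row) for row in zip(*image[::-1])]

def hflip(image):
    """Mirror a 2-D image left to right."""
    return [row[::-1] for row in image]

def augment(image):
    """Return the image plus its rotations and mirror images: 8 variants in total."""
    variants = []
    current = image
    for _ in range(4):
        variants.append(current)
        variants.append(hflip(current))
        current = rotate90(current)
    return variants

tiny_image = [[1, 2],
              [3, 4]]
for variant in augment(tiny_image):
    print(variant)
```

Only transforms that preserve the label are safe to use: a flipped photo of a cat is still a cat, but a rotated handwritten “6” can look like a “9”.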

Navigating the AI training landscape effectively

The journey of training an AI model is rarely linear. It’s an iterative process involving experimentation, debugging, and fine-tuning. While the technical factors like data, model complexity, and hardware are paramount, the human element—the expertise of data scientists and machine learning engineers—is equally crucial in optimizing this process. They make the critical decisions that can shave days or weeks off training times, ensuring that the AI models we build are not only powerful but also developed efficiently. As AI continues to evolve, so too will the methods and technologies we use to train these intelligent systems, making the ‘how long’ question an ever-evolving challenge and opportunity.
