What are diffusion models? The magic behind AI art

Have you ever marveled at the incredible images generated by AI tools like DALL-E, Midjourney, or Stable Diffusion? These aren’t just clever filters; they’re the result of a powerful class of artificial intelligence known as diffusion models. At TechDecoded, we’re all about making complex tech understandable, and diffusion models are a prime example of AI’s creative potential.

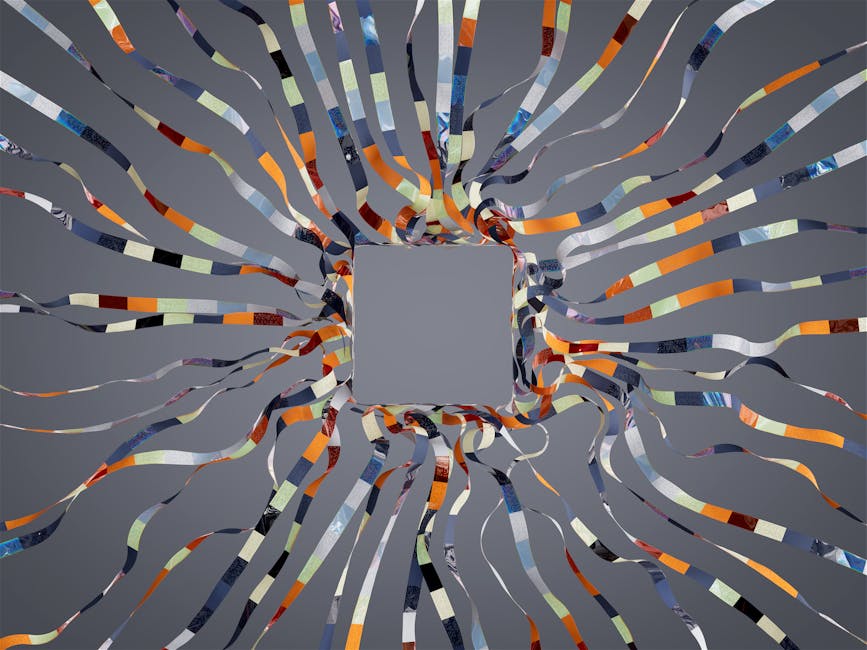

Simply put, diffusion models are AI systems designed to generate new data (like images, audio, or even video) by learning to reverse a process of gradual data corruption. Imagine starting with a clear image, slowly adding noise until it’s just static, and then teaching an AI to reverse that process, step by step, to reconstruct the original image – or even create a brand new one from scratch.

They’ve revolutionized the field of generative AI, making it possible for anyone to create stunning, unique visuals from simple text prompts. But how do they actually work their magic?

The magic behind the pixels: how diffusion models operate

The core idea behind diffusion models is surprisingly intuitive, even if the underlying math is complex. Think of it as a two-part process:

- Forward diffusion (the ‘noise’ part): This process is used during training. A clean image has random noise added to it over many steps, until it is completely unrecognizable – just pure static. It’s like slowly burying a photo under TV snow until nothing of the original is left. In the standard setup, the noising procedure itself is fixed rather than learned; what the model learns is to predict the noise that was added at each step.

- Reverse diffusion (the ‘denoising’ part): This is the generation phase. Once trained, the model can run the noise-adding process in reverse. Given a noisy image (or even just pure random noise), it predicts and removes a little noise at each step, iteratively refining the image until a clear, coherent picture emerges. This is where the creativity happens. By starting with different random noise patterns and guiding the denoising with a text prompt, the model can generate a practically unlimited variety of images.

The model essentially learns the statistical patterns that make real-world images look the way they do, allowing it to ‘imagine’ and construct new ones that look incredibly realistic. Rather than looking up stored pictures, it generates new images from the patterns it picked up during training.
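To make the noise-adding half of the process concrete, here is a minimal sketch in plain Python. It is an illustration under stated assumptions, not how any particular tool is implemented: the function names, the 1,000-step count, the linear schedule values, and the four-number "image" are all chosen for the example. It relies on a standard shortcut for Gaussian noise: instead of adding noise one step at a time, you can jump straight to any noise level by blending the image with a single noise sample.

```python
import math
import random

def linear_beta_schedule(num_steps, beta_start=1e-4, beta_end=0.02):
    """Per-step noise variances rising linearly (assumed, DDPM-style values)."""
    if num_steps == 1:
        return [beta_start]
    step = (beta_end - beta_start) / (num_steps - 1)
    return [beta_start + i * step for i in range(num_steps)]

def cumulative_signal(betas):
    """alpha_bar[t]: share of the original signal (as variance) left after step t."""
    alpha_bars = []
    running = 1.0
    for beta in betas:
        running *= 1.0 - beta
        alpha_bars.append(running)
    return alpha_bars

def add_noise(pixels, alpha_bar, rng):
    """Blend the clean pixels with Gaussian noise for a given noise level."""
    noise = [rng.gauss(0.0, 1.0) for _ in pixels]
    noisy = [
        math.sqrt(alpha_bar) * p + math.sqrt(1.0 - alpha_bar) * n
        for p, n in zip(pixels, noise)
    ]
    # The noise is returned too: during training it is what the model must predict.
    return noisy, noise

betas = linear_beta_schedule(1000)
alpha_bars = cumulative_signal(betas)

rng = random.Random(0)
image = [0.9, -0.3, 0.5, 0.1]  # toy 4-pixel "image", values scaled to [-1, 1]

slightly_noisy, _ = add_noise(image, alpha_bars[9], rng)   # after 10 steps
almost_static, _ = add_noise(image, alpha_bars[-1], rng)   # after all 1,000 steps
```

With these assumed settings, `alpha_bars` starts just under 1 and ends below 0.001, so `slightly_noisy` still closely resembles `image` while `almost_static` is essentially pure noise.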

A practical example: generating an image step-by-step

Let’s walk through how you might use a diffusion model to create an image:

- You provide a prompt: You type something like, “A futuristic city skyline at sunset, cyberpunk style, with flying cars and neon lights.”

- The model starts with noise: The AI begins with a canvas of pure random static, like TV snow.

- Iterative denoising: Guided by your prompt, the model starts its reverse diffusion process. In the first few steps, it might identify broad shapes and colors – perhaps a hint of orange for the sunset, dark blocks for buildings.

- Refinement and detail: With each subsequent step, the model removes more noise, adding finer details. Buildings become sharper, neon lights appear, and flying cars take shape.

- The final masterpiece: After many of these denoising steps (typically a few dozen with modern samplers, and up to a thousand in early diffusion models), a fully formed image matching your prompt emerges.

This iterative process is what gives diffusion models their incredible flexibility and ability to produce such high-quality, detailed outputs.
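The loop described in the steps above can be sketched in a few lines of plain Python. This is a toy under loud assumptions: `toy_noise_predictor` is a hypothetical stand-in for the trained, prompt-guided neural network, and it cheats by already knowing the one "image" the prompt describes. A real model only estimates the noise. The update rule follows the DDPM-style sampling step, and the step count, schedule values, and four-number "image" are picked for illustration.

```python
import math
import random

NUM_STEPS = 1000
# Assumed linear noise schedule (illustrative, DDPM-style values).
betas = [1e-4 + (0.02 - 1e-4) * t / (NUM_STEPS - 1) for t in range(NUM_STEPS)]
alpha_bars = []
running = 1.0
for beta in betas:
    running *= 1.0 - beta
    alpha_bars.append(running)

def toy_noise_predictor(noisy, t, target):
    """Hypothetical stand-in for a trained, prompt-conditioned network.

    It knows the single image the prompt describes (`target`) and returns
    the exact noise separating `noisy` from it at step t.
    """
    scale = math.sqrt(alpha_bars[t])
    spread = math.sqrt(1.0 - alpha_bars[t])
    return [(x - scale * x0) / spread for x, x0 in zip(noisy, target)]

def sample(target, rng):
    """DDPM-style sampling: start from static and denoise step by step."""
    x = [rng.gauss(0.0, 1.0) for _ in target]  # canvas of pure random noise
    for t in reversed(range(NUM_STEPS)):
        predicted = toy_noise_predictor(x, t, target)
        alpha = 1.0 - betas[t]
        coeff = betas[t] / math.sqrt(1.0 - alpha_bars[t])
        # Remove the share of noise attributed to this step.
        x = [(xi - coeff * ei) / math.sqrt(alpha) for xi, ei in zip(x, predicted)]
        if t > 0:
            # Re-inject a little fresh noise on every step except the last.
            sigma = math.sqrt(betas[t])
            x = [xi + sigma * rng.gauss(0.0, 1.0) for xi in x]
    return x

target = [0.9, -0.3, 0.5, 0.1]  # toy 4-pixel "image" standing in for the prompt
result = sample(target, random.Random(0))
```

Because the stand-in predictor is exact, `result` lands on `target` whatever the starting noise. With a real network, different starting noise leads to different images that all fit the prompt, which is where the variety comes from.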

Beyond images: other applications of diffusion models

While image generation is their most famous application, diffusion models are incredibly versatile and are being adapted for a wide range of tasks:

- Video generation: Creating short video clips from text prompts or existing images.

- Audio synthesis: Generating realistic speech, music, or sound effects.

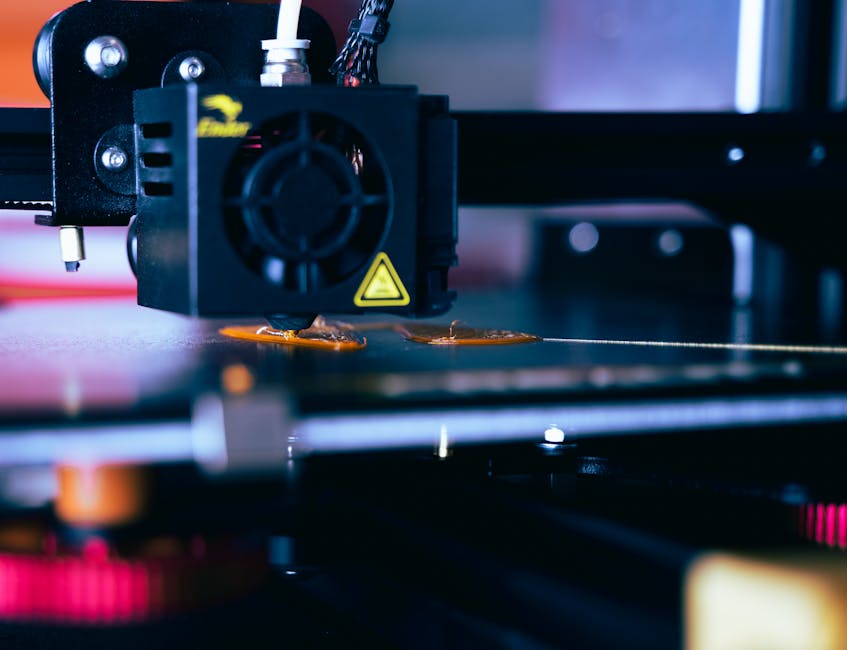

- 3D model creation: Producing intricate 3D objects and scenes.

- Drug discovery: Designing new molecular structures with desired properties.

- Data augmentation: Creating synthetic data to train other AI models, especially in fields where real data is scarce.

The potential for these models to transform various industries is immense, extending far beyond just digital art.

Why diffusion models matter for your future

Diffusion models are more than just a cool party trick; they represent a significant leap forward in AI’s creative capabilities. For you, this means:

- Unleashed creativity: Tools powered by diffusion models make high-quality visual creation accessible to everyone, regardless of artistic skill.

- New forms of expression: They enable entirely new ways to tell stories, design products, and visualize ideas.

- Practical applications: From marketing materials to scientific research, these models are becoming indispensable tools.

- Understanding the future: As AI continues to evolve, understanding foundational technologies like diffusion models helps you stay ahead in a rapidly changing tech landscape.

At TechDecoded, we believe that demystifying these powerful tools empowers you to harness their potential. Diffusion models are not just generating images; they’re shaping the future of digital creation and innovation, one denoising step at a time.
