The seductive promise of artificial intelligence

Artificial intelligence has rapidly moved from science fiction to an integral part of our daily lives. From smart assistants managing our schedules to complex algorithms powering medical diagnostics and financial trading, AI promises efficiency, accuracy, and convenience. It’s easy to be captivated by its capabilities, to see it as an infallible oracle that can solve problems faster and better than any human. This allure often leads us down a path of increasing reliance, where we begin to accept AI’s outputs without question.

At TechDecoded, we’re all about demystifying AI and helping you use it effectively. But part of that understanding involves recognizing its limitations and potential pitfalls. Skepticism is often cast as a barrier to progress, but when it comes to AI, we argue that over-trusting it poses a far greater danger than a cautious, even critical, approach.

When convenience breeds complacency

The primary danger of over-trusting AI lies in the complacency it can foster. As AI systems become more sophisticated and their interfaces more user-friendly, the temptation to outsource critical thinking and decision-making to them grows. We might stop scrutinizing the data, questioning the recommendations, or verifying the outcomes. This isn’t just about laziness; it’s about a fundamental shift in responsibility.

- Erosion of critical skills: Relying too heavily on AI for tasks like writing, analysis, or problem-solving can dull our own cognitive abilities. If an AI always provides the ‘answer,’ do we still learn how to formulate the question effectively?

- Blind spots and biases: AI systems are trained on data, and that data often reflects existing human biases, inaccuracies, or incomplete information. If we trust AI blindly, we risk perpetuating these flaws, amplifying them, and making decisions based on skewed perspectives without even realizing it.

- Lack of accountability: When an AI makes a mistake, who is responsible? If a self-driving car causes an accident, or an AI-powered medical diagnostic tool misidentifies a condition, the line of accountability becomes blurred, especially if human oversight was minimal.

Consider the many cases in which AI chatbots have ‘hallucinated’ facts or given dangerously incorrect advice. If a user trusts these outputs without cross-referencing them, the consequences can range from embarrassing to genuinely harmful.

The power of informed distrust

Distrust, in this context, isn’t about rejecting AI outright. Instead, it’s about cultivating an informed skepticism – a critical mindset that recognizes AI as a powerful tool, but one that requires human guidance, validation, and ethical consideration. This ‘distrust’ empowers us to:

- Question the source: Understand where the AI’s training data comes from and what limitations that data may carry.

- Verify the output: Cross-reference AI-generated information with other reliable sources.

- Understand the ‘why’: Demand transparency from AI systems, where possible, to understand the reasoning behind their recommendations.

- Maintain human oversight: Ensure that critical decisions, especially those with significant ethical or safety implications, always have a human in the loop.

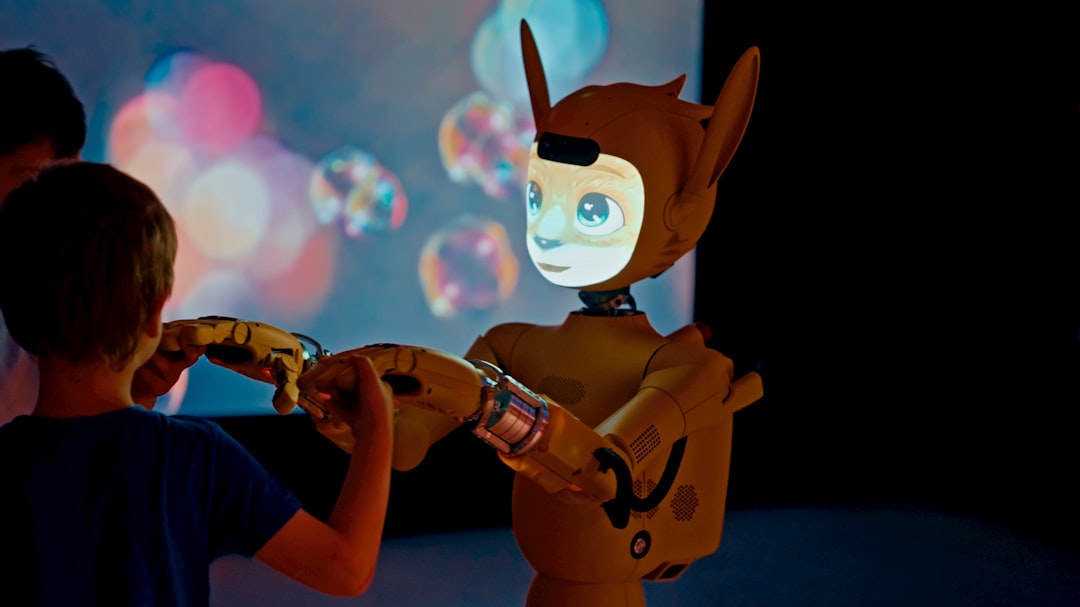

This approach transforms AI from an autonomous decision-maker into a powerful assistant, augmenting human capabilities rather than replacing them entirely. It’s about leveraging AI’s strengths – its speed, its ability to process vast amounts of data – while mitigating its weaknesses with human judgment and ethical reasoning.

Cultivating a balanced relationship with AI

The path forward isn’t about choosing between absolute trust and absolute distrust. It’s about finding a pragmatic balance, one that acknowledges AI’s immense potential while respecting its inherent limitations. At TechDecoded, we advocate for a framework of ‘critical engagement’ with AI.

- Educate yourself: Understand the basics of how AI works, its different types, and common pitfalls.

- Start small and test: Don’t deploy AI in critical systems without thorough testing and validation.

- Prioritize transparency: Whenever possible, choose AI tools that offer some level of explainability or allow for human intervention.

- Foster a culture of questioning: Encourage teams and individuals to challenge AI outputs and provide feedback.

- Remember the human element: AI is a tool designed to serve humanity. Its ultimate purpose should be to enhance human well-being, not to diminish human agency or responsibility.

By embracing a healthy skepticism, we empower ourselves to harness the true power of AI safely and effectively, ensuring that technology remains a servant, not a master, in our increasingly complex world.

A practical path forward for AI users

Navigating the AI landscape requires more than learning how to prompt a chatbot or use a new tool. It demands a shift in mindset: from passive acceptance to active, informed engagement. For individuals and organizations alike, this means building processes that treat AI as a powerful assistant, not an unquestionable authority. Always ask, ‘What if the AI is wrong?’, and have a plan for verification and correction. This proactive approach can not only guard against potential dangers but also unlock the responsible potential of artificial intelligence.
